import sys

import petsc4py

# Tell PETSc to ignore harmless system signals and let Python handle them

petsc4py.init(sys.argv + ['-no_signal_handler'])

import numpy as np

import pandas as pd

import matplotlib.pyplot as pl

from matplotlib import rcParams

import scanpy as sc

import scipy

import numpy as np

import matplotlib.pyplot as plt

import warnings

import cellrank as cr

import scvelo as scv

warnings.simplefilter(action="ignore", category=Warning)

# verbosity: errors (0), warnings (1), info (2), hints (3)

sc.settings.verbosity = 3

sc.settings.set_figure_params(dpi=100, frameon=False, figsize=(5, 5), facecolor='white', color_map = 'viridis_r') Code chunks run Python commands unless it starts with %%bash, in which case, those chunks run shell commands.

Partly following this PAGA tutorial with some modifications.

1 Loading libraries

2 Preparing data

In order to speed up the computations during the exercises, we will be using a subset of a bone marrow dataset (originally containing about 100K cells). The bone marrow is the source of adult immune cells, and contains virtually all differentiation stages of cell from the immune system which later circulate in the blood to all other organs.

If you have been using the Seurat, Bioconductor or Scanpy toolkits with your own data, you need to reach to the point where can find get:

- A dimensionality reduction where to perform the trajectory (for example: PCA, ICA, MNN, harmony, Diffusion Maps, UMAP)

- The cell clustering information (for example: from Louvain, k-means)

- A KNN/SNN graph (this is useful to inspect and sanity-check your trajectories)

In this case, all the data has been preprocessed with Seurat with standard pipelines. In addition there was some manual filtering done to remove clusters that are disconnected and cells that are hard to cluster, which can be seen in this script

The file trajectory_scanpy_filtered.h5ad was converted from the Seurat object using the SeuratDisk package. For more information on how it was done, have a look at the script: convert_to_h5ad.R in the github repo.

You can download the data with the commands:

import os

import subprocess

# download pre-computed data if missing or long compute

fetch_data = True

# url for source and intermediate data

path_data = "https://nextcloud.dc.scilifelab.se/public.php/webdav"

curl_upass = "zbC5fr2LbEZ9rSE:scRNAseq2025"

path_results = "data/trajectory"

if not os.path.exists(path_results):

os.makedirs(path_results, exist_ok=True)

path_file = "data/trajectory/trajectory_seurat_filtered.h5ad"

if not os.path.exists(path_file):

file_url = os.path.join(path_data, "trajectory/trajectory_seurat_filtered.h5ad")

subprocess.call(["curl", "-u", curl_upass, "-o", path_file, file_url ]) 3 Reading data

We already have pre-computed and subsetted the dataset (with 6688 cells and 3585 genes) following the analysis steps in this course. We then saved the objects, so you can use common tools to open and start to work with them (either in R or Python).

adata = sc.read_h5ad("data/trajectory/trajectory_seurat_filtered.h5ad")

adata.var| features | |

|---|---|

| 0610040J01Rik | 0610040J01Rik |

| 1190007I07Rik | 1190007I07Rik |

| 1500009L16Rik | 1500009L16Rik |

| 1700012B09Rik | 1700012B09Rik |

| 1700020L24Rik | 1700020L24Rik |

| ... | ... |

| Sqor | Sqor |

| Sting1 | Sting1 |

| Tent5a | Tent5a |

| Tlcd4 | Tlcd4 |

| Znrd2 | Znrd2 |

3585 rows × 1 columns

# check what you have in the X matrix, should be lognormalized counts.

print(adata.X[:10,:10])<Compressed Sparse Row sparse matrix of dtype 'float64'

with 25 stored elements and shape (10, 10)>

Coords Values

(0, 4) 0.11622072805743532

(0, 8) 0.4800893970571722

(1, 8) 0.2478910541698065

(1, 9) 0.17188973970230348

(2, 1) 0.09413397843954842

(2, 7) 0.18016412971724202

(3, 1) 0.08438841021254412

(3, 4) 0.08438841021254412

(3, 7) 0.08438841021254412

(3, 8) 0.3648216463668793

(4, 1) 0.14198147850903975

(4, 8) 0.14198147850903975

(5, 1) 0.17953169693896723

(5, 8) 0.17953169693896723

(5, 9) 0.17953169693896723

(6, 4) 0.2319546390006887

(6, 8) 0.42010741700351195

(7, 1) 0.1775659421407816

(7, 8) 0.39593115482156394

(7, 9) 0.09271901219711086

(8, 1) 0.12089079757716388

(8, 8) 0.22873058755480363

(9, 1) 0.08915380247493314

(9, 4) 0.08915380247493314

(9, 8) 0.382703987185901044 Explore the data

There is a umap and clusters provided with the object, first plot some information from the previous analysis onto the umap.

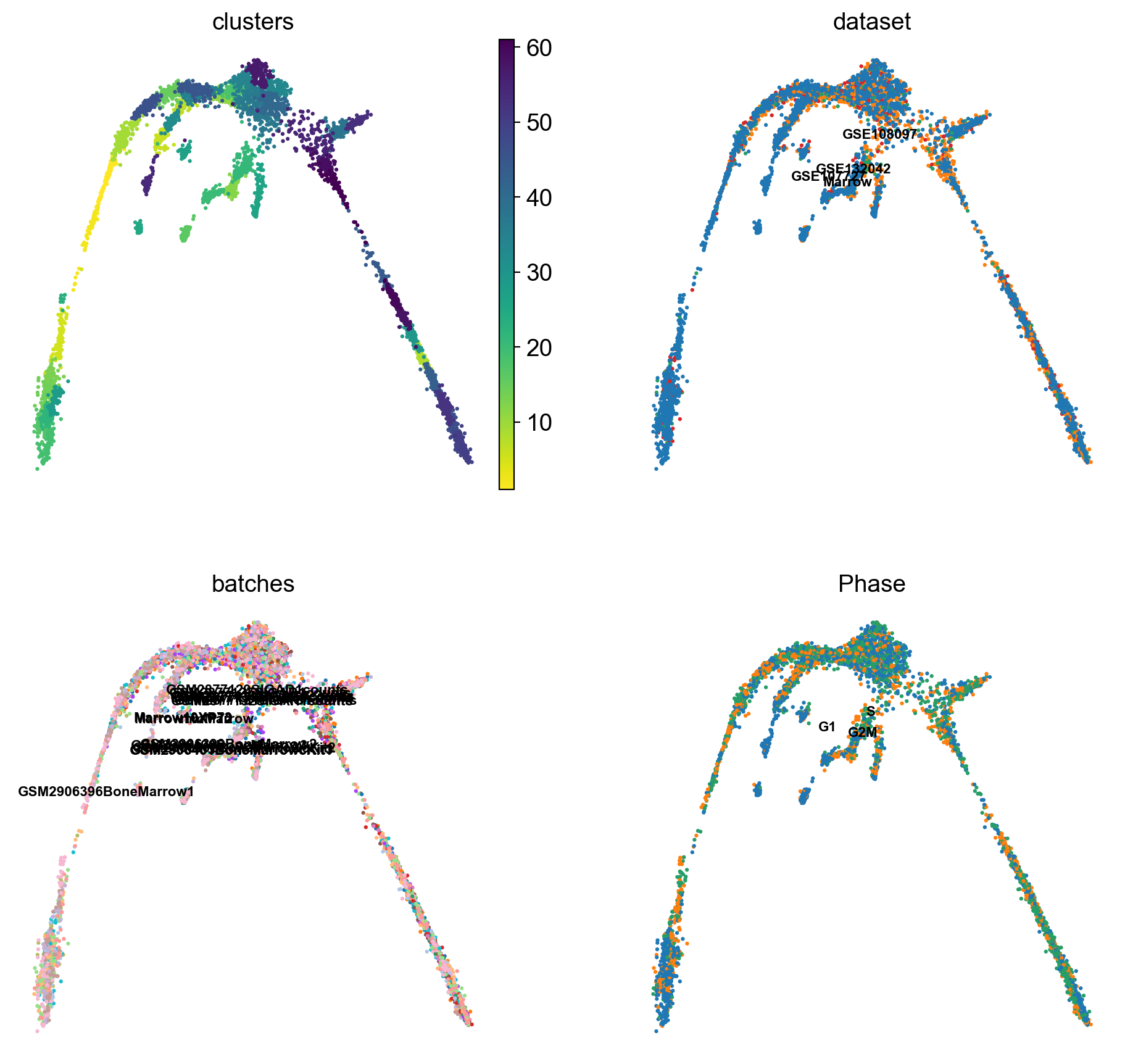

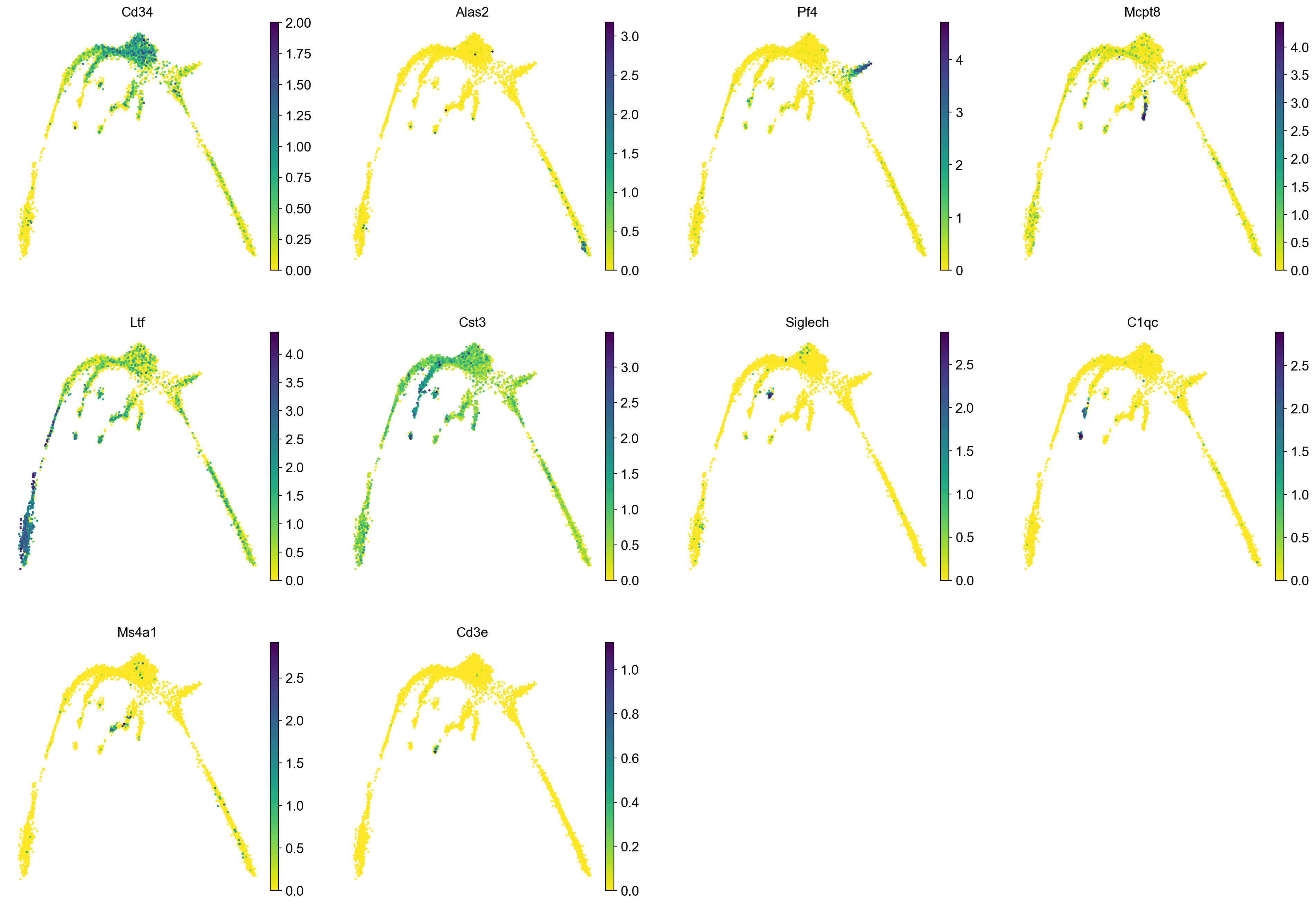

sc.pl.umap(adata, color = ['clusters','dataset','batches','Phase'],legend_loc = 'on data', legend_fontsize = 'xx-small', ncols = 2)It is crucial that you performing analysis of a dataset understands what is going on, what are the clusters you see in your data and most importantly How are the clusters related to each other?. Well, let’s explore the data a bit. With the help of this table, write down which cluster numbers in your dataset express these key markers.

| Marker | Cell Type |

|---|---|

| Cd34 | HSC progenitor |

| Ms4a1 | B cell lineage |

| Cd3e | T cell lineage |

| Ltf | Granulocyte lineage |

| Cst3 | Monocyte lineage |

| Cpa3 | Mast Cell/Basophil lineage |

| Alas2 | RBC lineage |

| Siglech | Dendritic cell lineage |

| C1qc | Macrophage cell lineage |

| Pf4 | Megakaryocyte cell lineage |

5 Rerun analysis in Scanpy

Redo clustering and umap using the basic Scanpy pipeline. Use the provided “X_harmony_Phase” dimensionality reduction as the starting point.

#calculate highly variable genes to get mean expression and dispersion for every gene - we will need this later

sc.pp.highly_variable_genes(adata, n_top_genes=2000)

# first, store the old umap with a new name so it is not overwritten

adata.obsm['X_umap_old'] = adata.obsm['X_umap']

sc.pp.neighbors(adata, n_pcs = 30, n_neighbors = 20, use_rep="X_harmony_Phase", random_state=159)

sc.tl.umap(adata, min_dist=0.4, spread=3, random_state=331)extracting highly variable genes

finished (0:00:01)

--> added

'highly_variable', boolean vector (adata.var)

'means', float vector (adata.var)

'dispersions', float vector (adata.var)

'dispersions_norm', float vector (adata.var)

computing neighbors

finished: added to `.uns['neighbors']`

`.obsp['distances']`, distances for each pair of neighbors

`.obsp['connectivities']`, weighted adjacency matrix (0:00:03)

computing UMAP

finished: added

'X_umap', UMAP coordinates (adata.obsm)

'umap', UMAP parameters (adata.uns) (0:00:12)sc.pl.umap(adata, color = ['clusters'],legend_loc = 'on data', legend_fontsize = 'xx-small', edges = True)

sc.pl.umap(adata, color = markers, use_raw = False, ncols = 4)

# Redo clustering as well

sc.tl.leiden(adata, key_added = "leiden_1.0", resolution = 1.0, random_state=137) # default resolution is 1.0

sc.tl.leiden(adata, key_added = "leiden_1.2", resolution = 1.2, random_state=137) # default resolution is 1.0

sc.tl.leiden(adata, key_added = "leiden_1.4", resolution = 1.4, random_state=137) # default resolution is 1.0

#sc.tl.louvain(adata, key_added = "leiden_1.0") # default resolution in 1.0

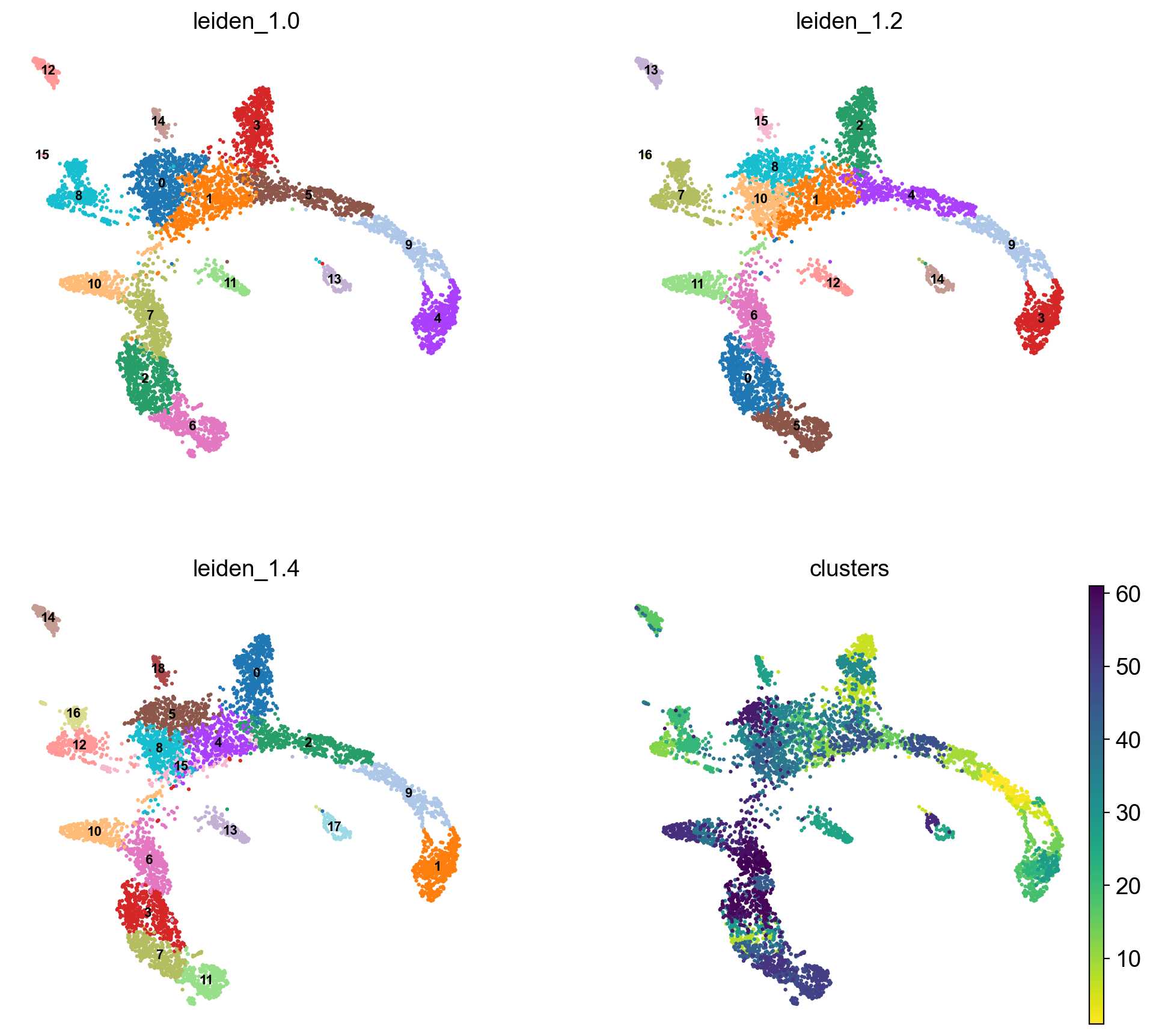

sc.pl.umap(adata, color = ['leiden_1.0', 'leiden_1.2', 'leiden_1.4','clusters'],legend_loc = 'on data', legend_fontsize = 'xx-small', ncols =2)running Leiden clustering

finished: found 14 clusters and added

'leiden_1.0', the cluster labels (adata.obs, categorical) (0:00:00)

running Leiden clustering

finished: found 16 clusters and added

'leiden_1.2', the cluster labels (adata.obs, categorical) (0:00:00)

running Leiden clustering

finished: found 18 clusters and added

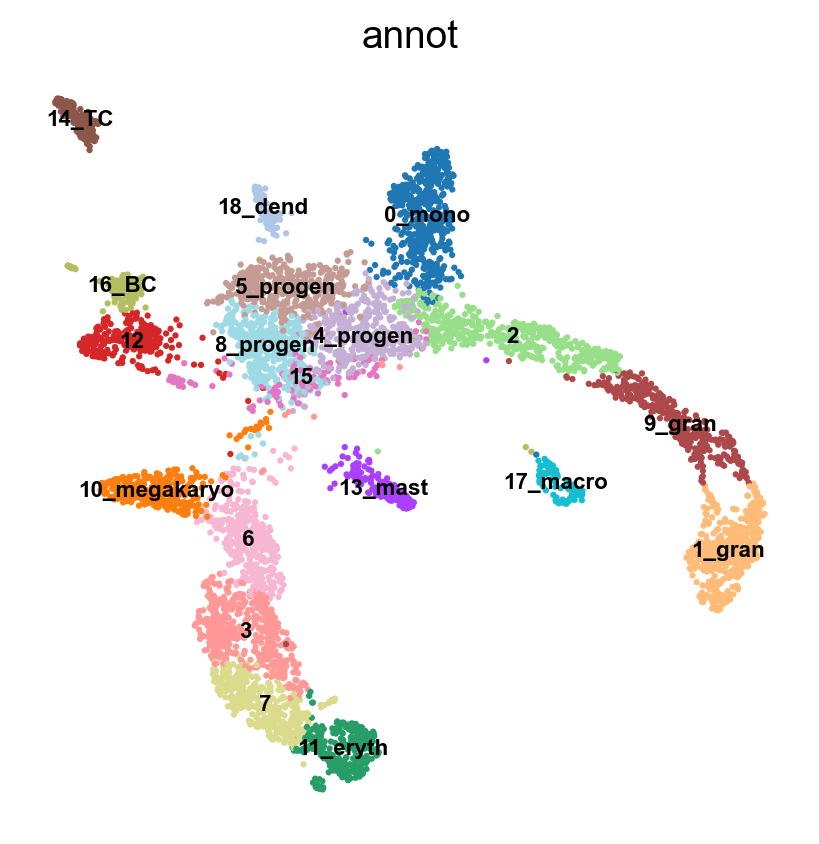

'leiden_1.4', the cluster labels (adata.obs, categorical) (0:00:00)#Rename clusters with really clear markers, the rest are left unlabelled.

annot = pd.DataFrame(adata.obs['leiden_1.4'].astype('string'))

annot[annot['leiden_1.4'] == '10'] = '10_megakaryo' #Pf4

annot[annot['leiden_1.4'] == '16'] = '16_macro' #C1qc

annot[annot['leiden_1.4'] == '12'] = '12_eryth' #Alas2

annot[annot['leiden_1.4'] == '17'] = '17_dend' #Siglech

annot[annot['leiden_1.4'] == '13'] = '13_mast_baso' #Cpa3

annot[annot['leiden_1.4'] == '5'] = '5_mono' #Cts3

annot[annot['leiden_1.4'] == '2'] = '2_gran' #Ltf

annot[annot['leiden_1.4'] == '14'] = '14_TC' #Cd3e

annot[annot['leiden_1.4'] == '15'] = '15_BC' #Ms4a1

annot[annot['leiden_1.4'] == '0'] = '0_progen' # Cd34

annot[annot['leiden_1.4'] == '9'] = '9_progen'

annot[annot['leiden_1.4'] == '7'] = '7_progen'

adata.obs['annot']=annot['leiden_1.4'].astype('category')

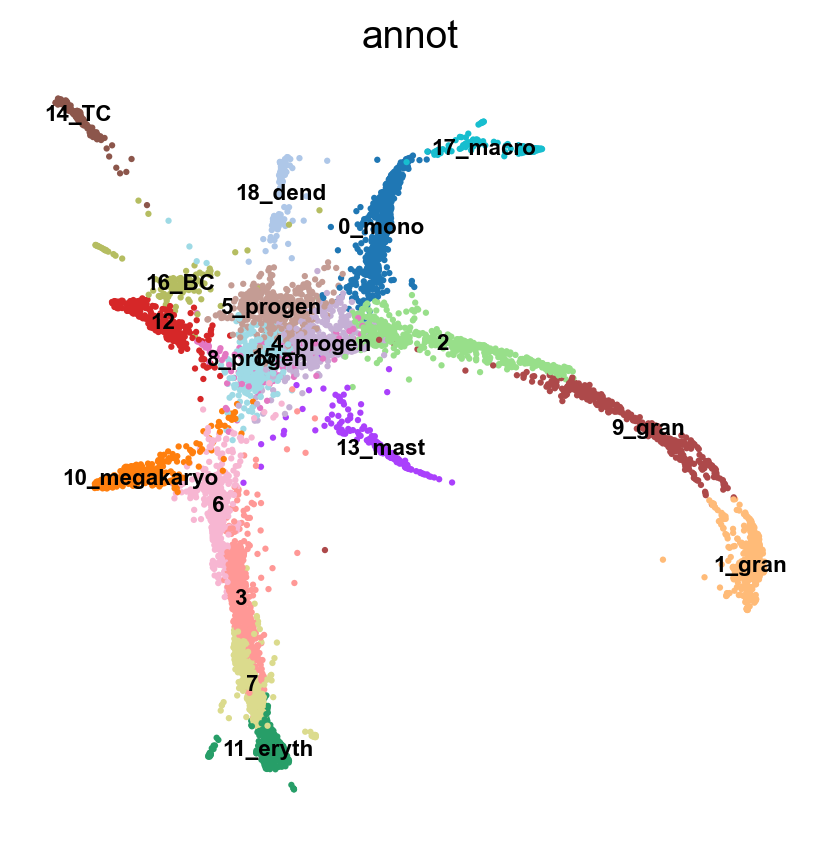

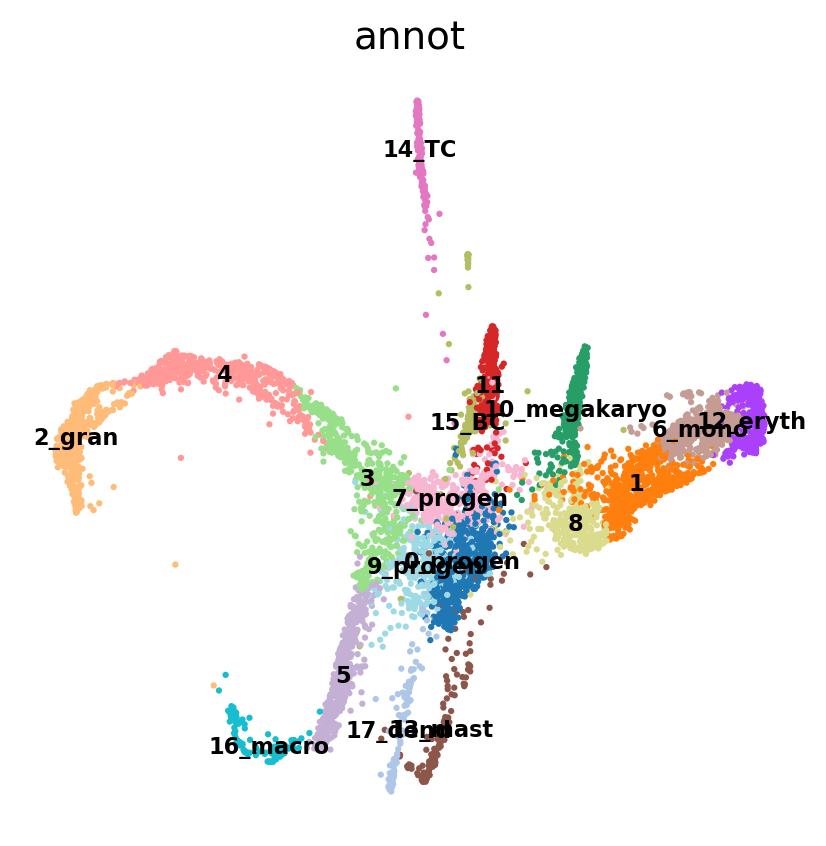

sc.pl.umap(adata, color = 'annot',legend_loc = 'on data', legend_fontsize = 'xx-small', ncols =2)

annot.value_counts()

#type(annot)

# astype('category')leiden_1.4

0_progen 546

1 518

2_gran 491

3 472

4 464

5_mono 416

6 409

7_progen 405

8 312

9_progen 308

10_megakaryo 294

11 268

12_eryth 257

13_mast_baso 161

14_TC 152

15_BC 129

16_macro 115

17_dend 111

Name: count, dtype: int646 Run PAGA

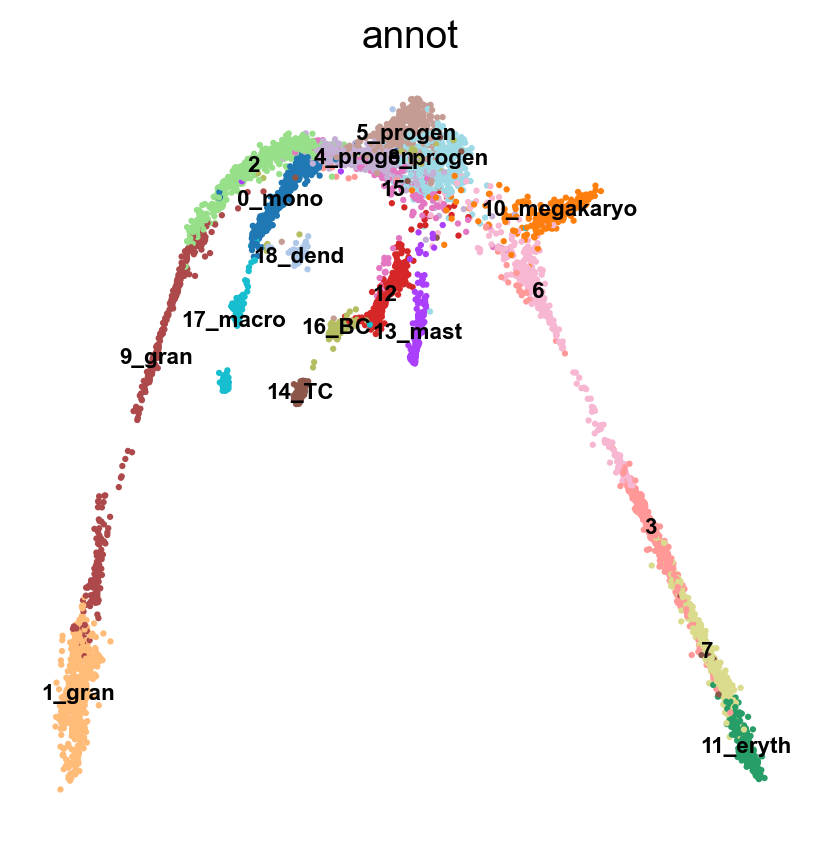

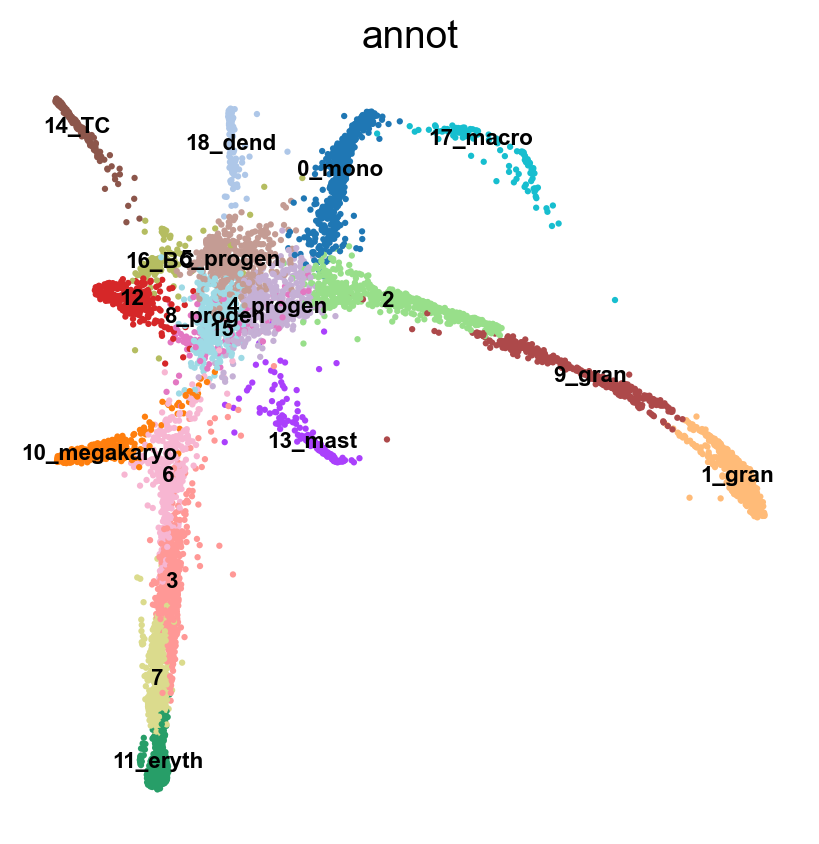

Use the clusters from leiden clustering with leiden_1.4 and run PAGA. PAGA is very good at revealing global topologies of the data that might be distorted in a UMAP. First we create the graph and initialize the positions using the UMAP.

# use the umap to initialize the graph layout.

sc.tl.draw_graph(adata, init_pos='X_umap')

sc.pl.draw_graph(adata, color='annot', legend_loc='on data', legend_fontsize = 'xx-small')

sc.tl.paga(adata, groups='annot')

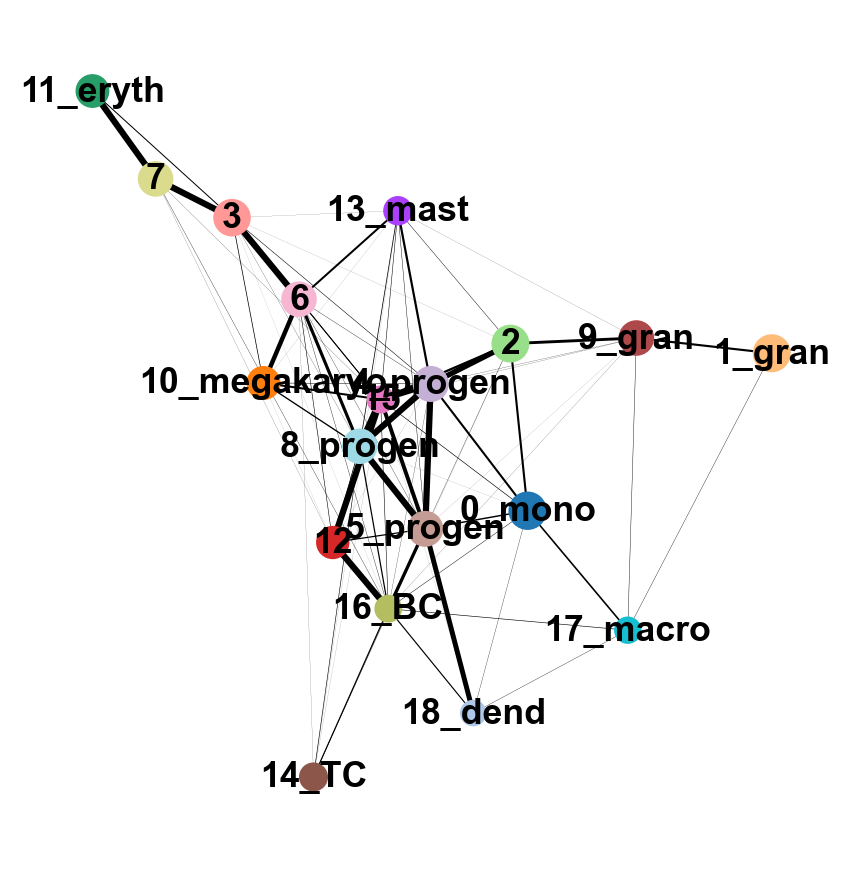

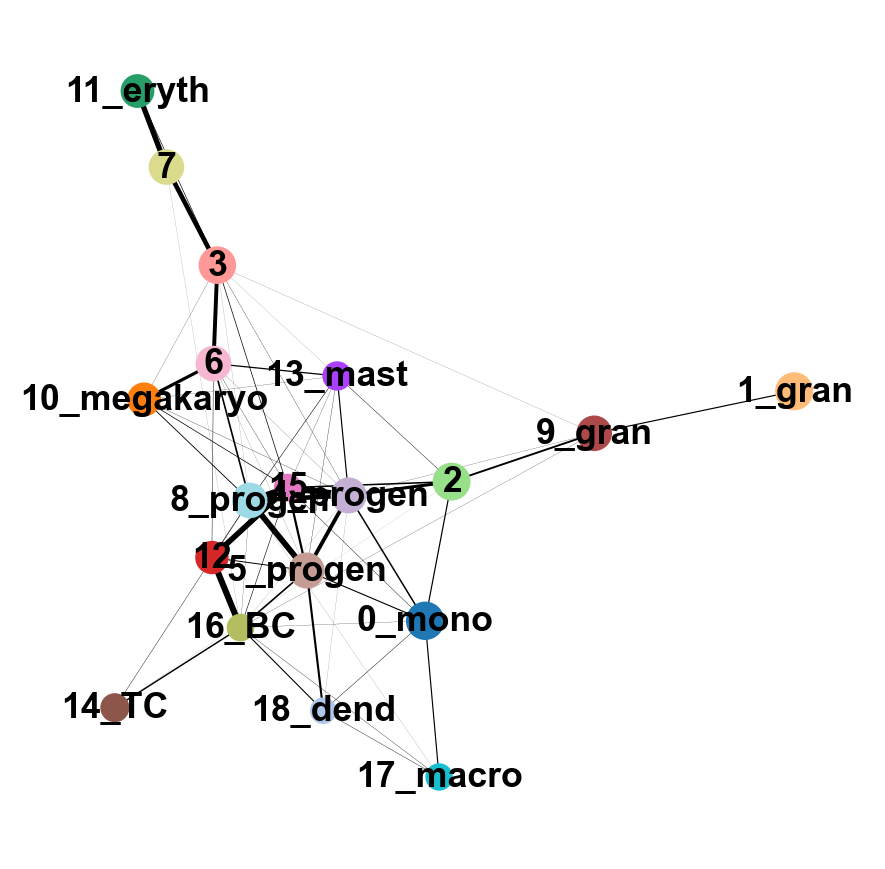

sc.pl.paga(adata, color='annot', edge_width_scale = 0.3)drawing single-cell graph using layout 'fa'

finished: added

'X_draw_graph_fa', graph_drawing coordinates (adata.obsm) (0:00:34)running PAGA

finished: added

'paga/connectivities', connectivities adjacency (adata.uns)

'paga/connectivities_tree', connectivities subtree (adata.uns) (0:00:00)

--> added 'pos', the PAGA positions (adata.uns['paga'])As you can see, we have edges between many clusters that we know are are unrelated, so we may need to clean up the data a bit more.

7 Filtering graph edges

First, lets explore the graph a bit. We plot the umap with the graph edges on top. The edges signify neighborhood between cells in the KNN-graph.

We have many edges in the graph between unrelated clusters, so lets try recalculating the graph with fewer neighbors. You can experiment with calculating different numbers of neighbors to see how it affects the graph and the connection between cells and nodes. Choosing too few neighbors however (<=5) will lead to problems with the downstream analysis for this dataset, as the graph becomes too sparse.

sc.pp.neighbors(adata, n_neighbors=8, use_rep = 'X_harmony_Phase', n_pcs = 30, random_state=159)

sc.pl.umap(adata, edges=True, color = 'annot', legend_loc= 'on data', legend_fontsize= 'xx-small')computing neighbors

finished: added to `.uns['neighbors']`

`.obsp['distances']`, distances for each pair of neighbors

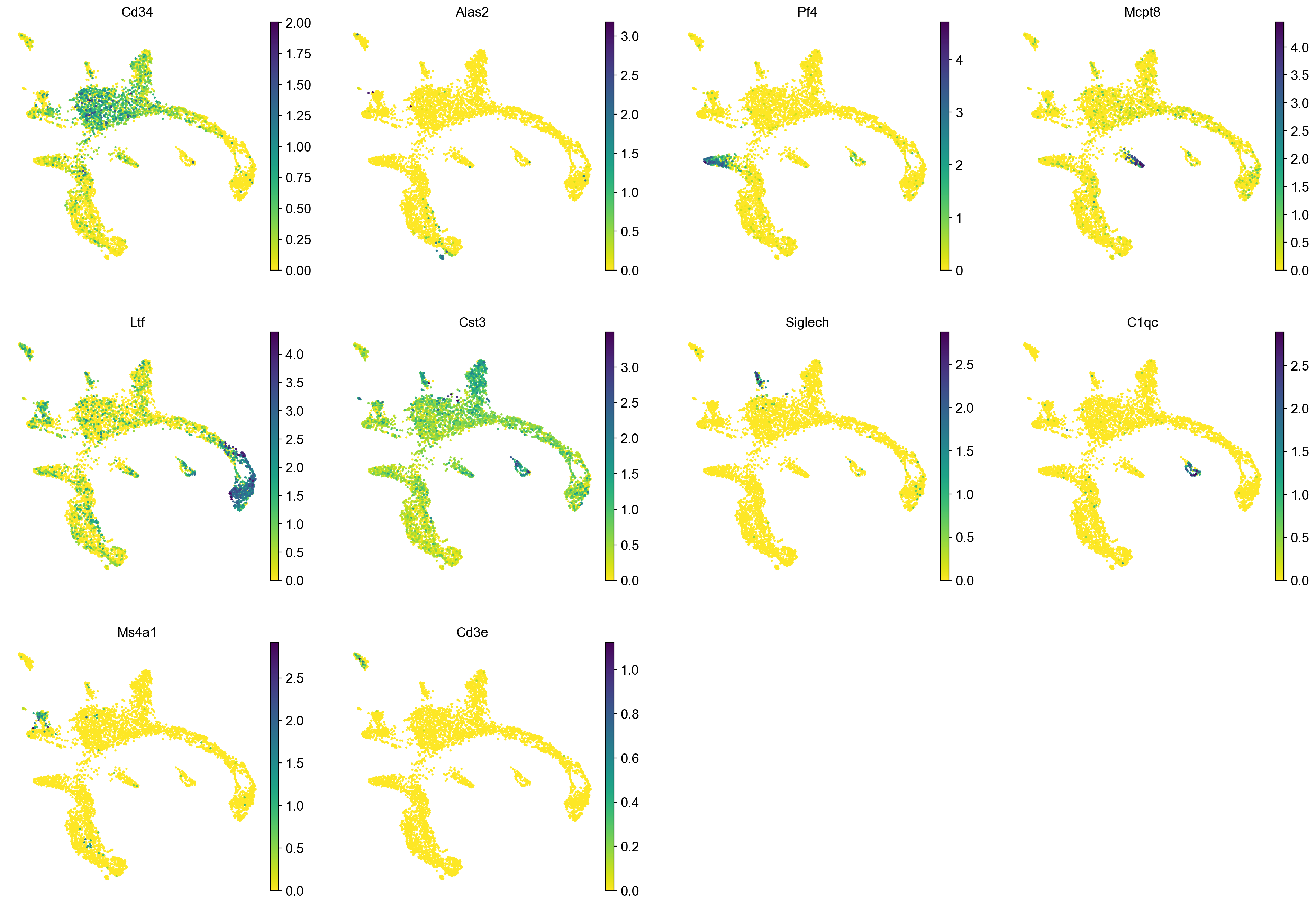

`.obsp['connectivities']`, weighted adjacency matrix (0:00:00)7.1 Rerun PAGA again on the data

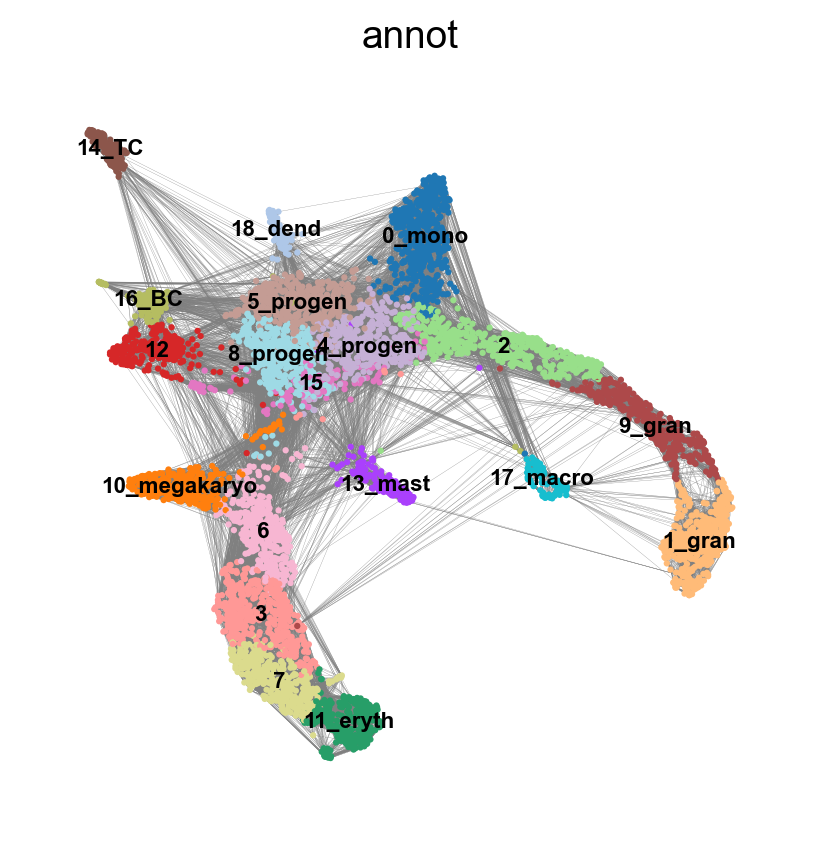

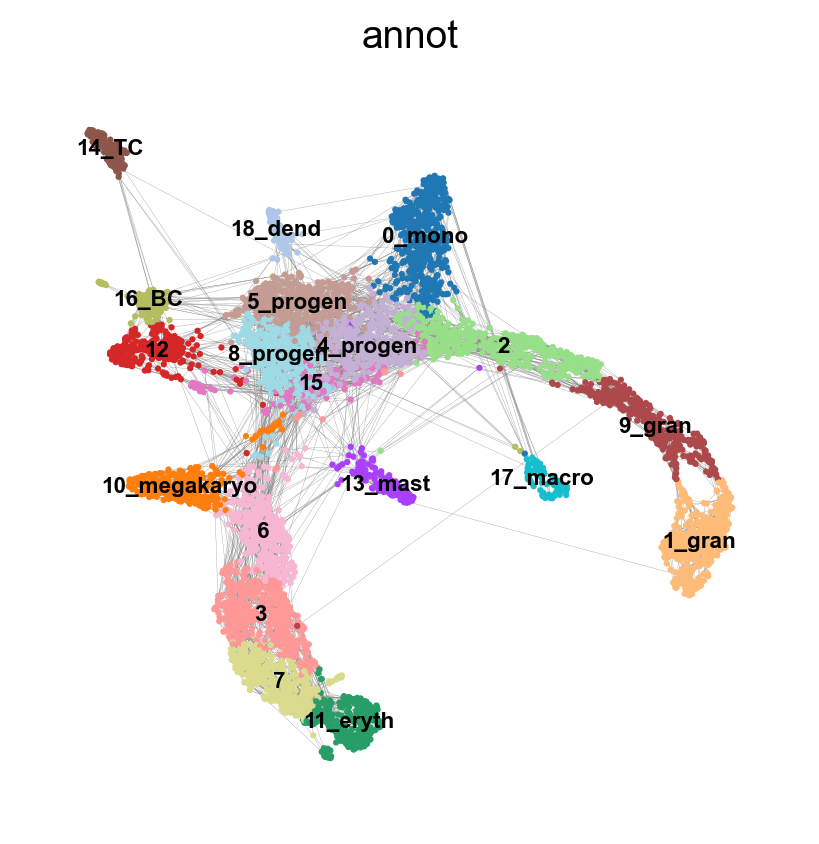

sc.tl.draw_graph(adata, init_pos='X_umap')

sc.pl.draw_graph(adata, color='annot', legend_loc='on data', legend_fontsize = 'xx-small')drawing single-cell graph using layout 'fa'

finished: added

'X_draw_graph_fa', graph_drawing coordinates (adata.obsm) (0:00:32)sc.tl.paga(adata, groups='annot')

sc.pl.paga(adata, color='annot', edge_width_scale = 0.3)running PAGA

finished: added

'paga/connectivities', connectivities adjacency (adata.uns)

'paga/connectivities_tree', connectivities subtree (adata.uns) (0:00:00)

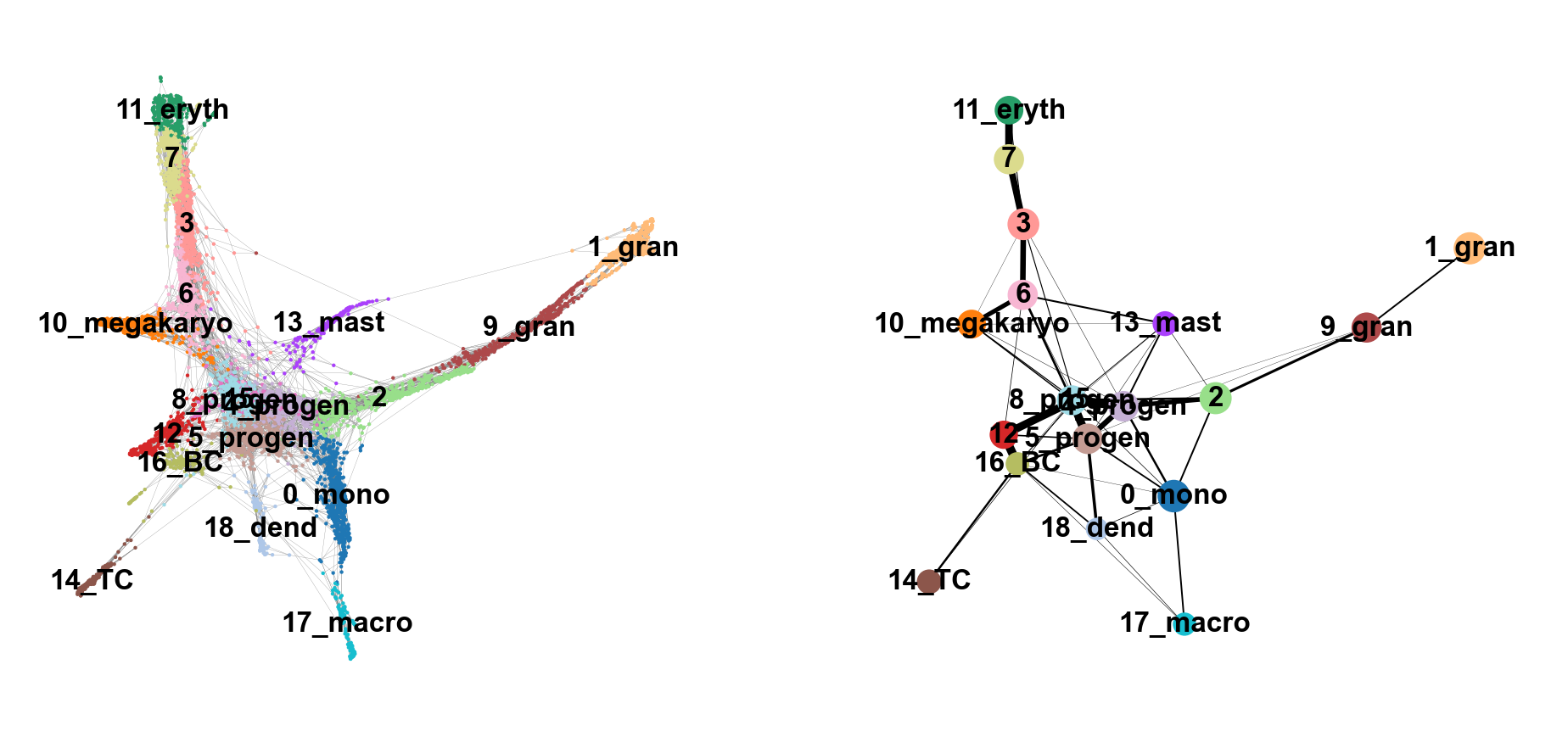

--> added 'pos', the PAGA positions (adata.uns['paga'])8 Embedding using PAGA-initialization

We can now redraw the graph using another starting position from the paga layout. The following is just as well possible for a UMAP.

sc.tl.draw_graph(adata, init_pos='paga')

sc.pl.draw_graph(adata, color=['annot'], legend_loc='on data', legend_fontsize= 'xx-small')drawing single-cell graph using layout 'fa'

finished: added

'X_draw_graph_fa', graph_drawing coordinates (adata.obsm) (0:00:36)We can now compare the two graphs. On the left, each cell is embedded on our PAGA graph, whereas on the right, each cluster of cells is represented as a node in the graph and each edge represents the connectivity between two clusters.

sc.pl.paga_compare(

adata, threshold=0.03, title='', right_margin=0.2, size=10, edge_width_scale=0.5,

legend_fontsize=12, fontsize=12, frameon=False, edges=True)--> added 'pos', the PAGA positions (adata.uns['paga'])Does this graph fit the biological expectations given what you know of hematopoesis. Please have a look at the figure in Section 2 and compare to the paths you now have.

9 Detecting gene changes along trajectories

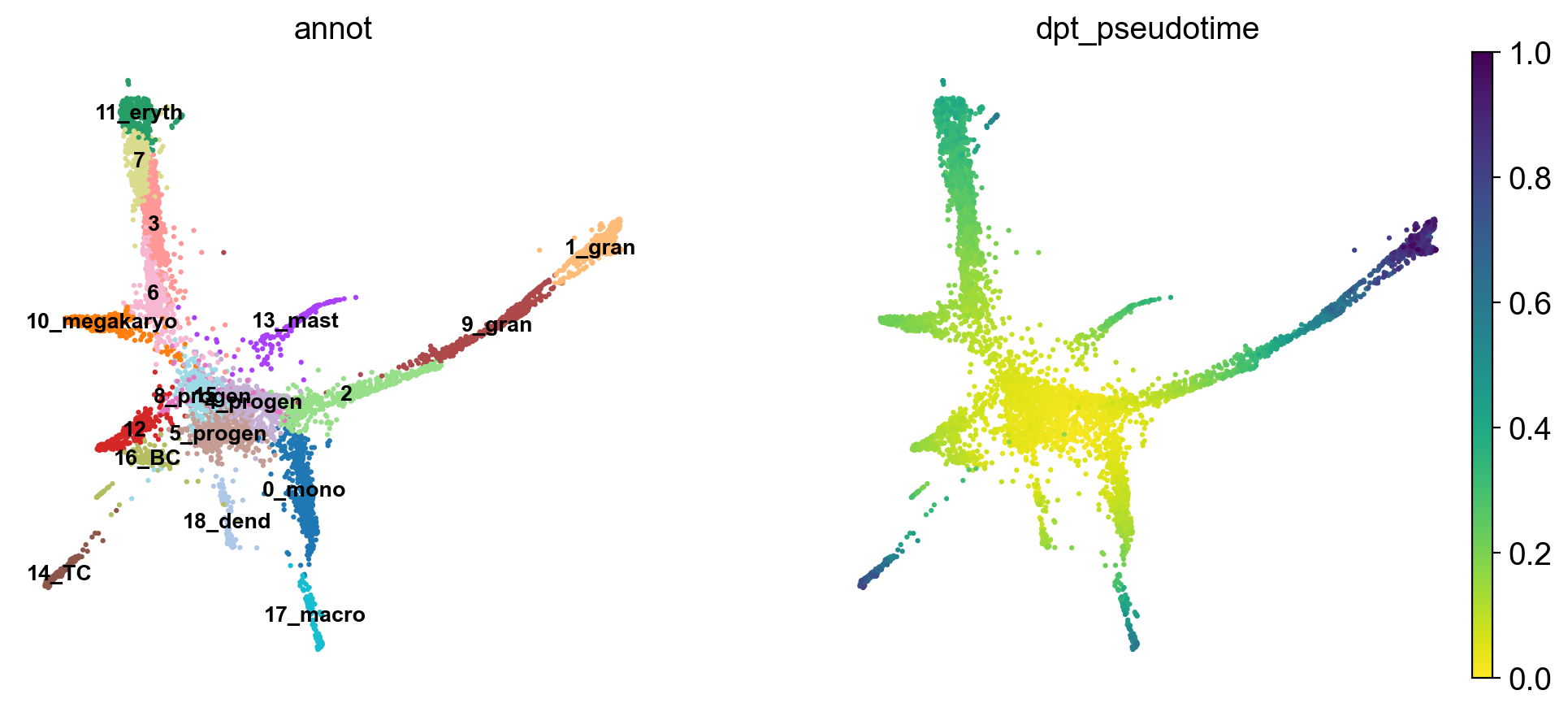

We can reconstruct gene changes along PAGA paths for a given set of genes. For this we calculate the diffusion pseudotime, which orders cells on a pseudotemporal axis based on their expression profile.

Choose a root cell for diffusion pseudotime. We have 3 progenitor clusters, but cluster 2 seems the most clear based on the Cd34 Marker, so we chose that cluster as starting timepoint for our pseudotime.

adata.uns['iroot'] = np.flatnonzero(adata.obs['annot'] == '0_progen')[0]

sc.tl.dpt(adata)WARNING: Trying to run `tl.dpt` without prior call of `tl.diffmap`. Falling back to `tl.diffmap` with default parameters.

computing Diffusion Maps using n_comps=15(=n_dcs)

computing transitions

finished (0:00:00)

eigenvalues of transition matrix

[1. 0.99812466 0.9953231 0.99262905 0.9893628 0.98886466

0.9860919 0.98588663 0.98264486 0.97911924 0.9788514 0.9765192

0.9742489 0.96989715 0.9587746 ]

finished: added

'X_diffmap', diffmap coordinates (adata.obsm)

'diffmap_evals', eigenvalues of transition matrix (adata.uns) (0:00:00)

computing Diffusion Pseudotime using n_dcs=10

finished: added

'dpt_pseudotime', the pseudotime (adata.obs) (0:00:00)Use the full raw data for visualization.

sc.pl.draw_graph(adata, color=['annot', 'dpt_pseudotime'], legend_loc='on data', legend_fontsize= 'x-small')The pseudotime represents the distance of every cell to the starting cluster. Have a look at the pseudotime plot, how well do you think it represents actual developmental time? What does it represent?

10 Using Cellrank to investigate gene expression changes along specific trajectories

Now that we have calculated pseudotime, we are interested in what genes might change during a certain lineages differentiation trajectory. To analyse this, we use Cellrank - a Python library that computes probablistic cell fate mappings. It basically models the probabilities for each cell to end up in a specific terminal state (in our case, fully differentiated cell types).

Cellrank can use different metrics to calculate a transition matrix, such as RNA velocity or pseudotime. As we have calculated pseudotime for our dataset above, we will use that for the calculation of our transition matrix.

#Set up a cellrank kernel

pt_kernel = cr.kernels.PseudotimeKernel(adata, time_key='dpt_pseudotime')

pt_kernel.compute_transition_matrix()Computing transition matrix based on pseudotime Finish (0:00:01)PseudotimeKernel[n=5828, dnorm=False, scheme='hard', frac_to_keep=0.3]In order to calculate terminal fates and lineage commitment, cellrank uses estimators like GPCCA. We set up an estimator from our PseudotimeKernel (pt_kernel) and compute macrostates in our dataset (groups of cells with similar transition dynamics).

#Set up an estimator to analyse the kernel

pt_est = cr.estimators.GPCCA(pt_kernel)

#Compute macrostates

pt_est.fit(cluster_key='annot', n_states=[10,15], n_cells=50)Computing Schur decomposition

Adding `adata.uns['eigendecomposition_fwd']`

`.schur_vectors`

`.schur_matrix`

`.eigendecomposition`

Finish (0:00:00)

Calculating minChi criterion in interval `[10, 15]`

Computing `10` macrostates

Adding `.macrostates`

`.macrostates_memberships`

`.coarse_T`

`.coarse_initial_distribution

`.coarse_stationary_distribution`

`.schur_vectors`

`.schur_matrix`

`.eigendecomposition`

Finish (0:00:08)GPCCA[kernel=PseudotimeKernel[n=5828], initial_states=None, terminal_states=None]Now that we have created an estimator and computed macrostates, we can either automatically compute our initial and terminal cell states using , or we can set them manually. In this case, we will set the initial state manually to 0_progen, but let cellrank predict the terminal states.

#Predict initial and terminal states

pt_est.set_initial_states({'0_progen': adata.obs_names[adata.obs['annot'] == '0_progen']},

cluster_key='annot', n_cells=30)

pt_est.predict_terminal_states(n_states=10, method='top_n', allow_overlap=True)Adding `adata.obs['init_states_fwd']`

`adata.obs['init_states_fwd_probs']`

`.initial_states`

`.initial_states_probabilities`

`.initial_states_memberships

Finish`

Adding `adata.obs['term_states_fwd']`

`adata.obs['term_states_fwd_probs']`

`.terminal_states`

`.terminal_states_probabilities`

`.terminal_states_memberships

Finish`GPCCA[kernel=PseudotimeKernel[n=5828], initial_states=['0_progen'], terminal_states=[np.str_('10_megakaryo'), np.str_('11'), np.str_('12_eryth'), np.str_('13_mast_baso'), np.str_('14_TC'), np.str_('15_BC'), np.str_('17_dend'), np.str_('2_gran'), np.str_('3'), np.str_('5_mono')]]Let’s plot the initial and terminal states on our single cell graph to see how well they correspond to what we would expect.

# Create figure with 1 row, 2 columns

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(15, 6))

# First plot: macrostates (left subplot)

scv.pl.scatter(

adata,

color='init_states_fwd',

basis='X_draw_graph_fa',

legend_loc='right',

frameon=False,

size=10,

linewidth=0,

ax=ax1, # Pass the axis

show=False # Don't show yet

)

# Second plot: terminal states (right subplot)

scv.pl.scatter(

adata,

color='term_states_fwd',

basis='X_draw_graph_fa',

legend_loc='right',

frameon=False,

size=10,

linewidth=0,

ax=ax2, # Pass the axis

show=False # Don't show yet

)

# Add titles

ax1.set_title('Macrostates (Forward)')

ax2.set_title('Terminal States (Forward)')

plt.tight_layout()

plt.show()Are the initial and terminal states predicted as you expected? What deviates from your expectation?

The results are influenced by a multitude of parameters, such as the PAGA graph connections (mediated by the n_neighbors parameter in sc.pp.neighbors) and the n_states parameter in pt_est.fit. Feel free to play with these parameters and see how the results change.

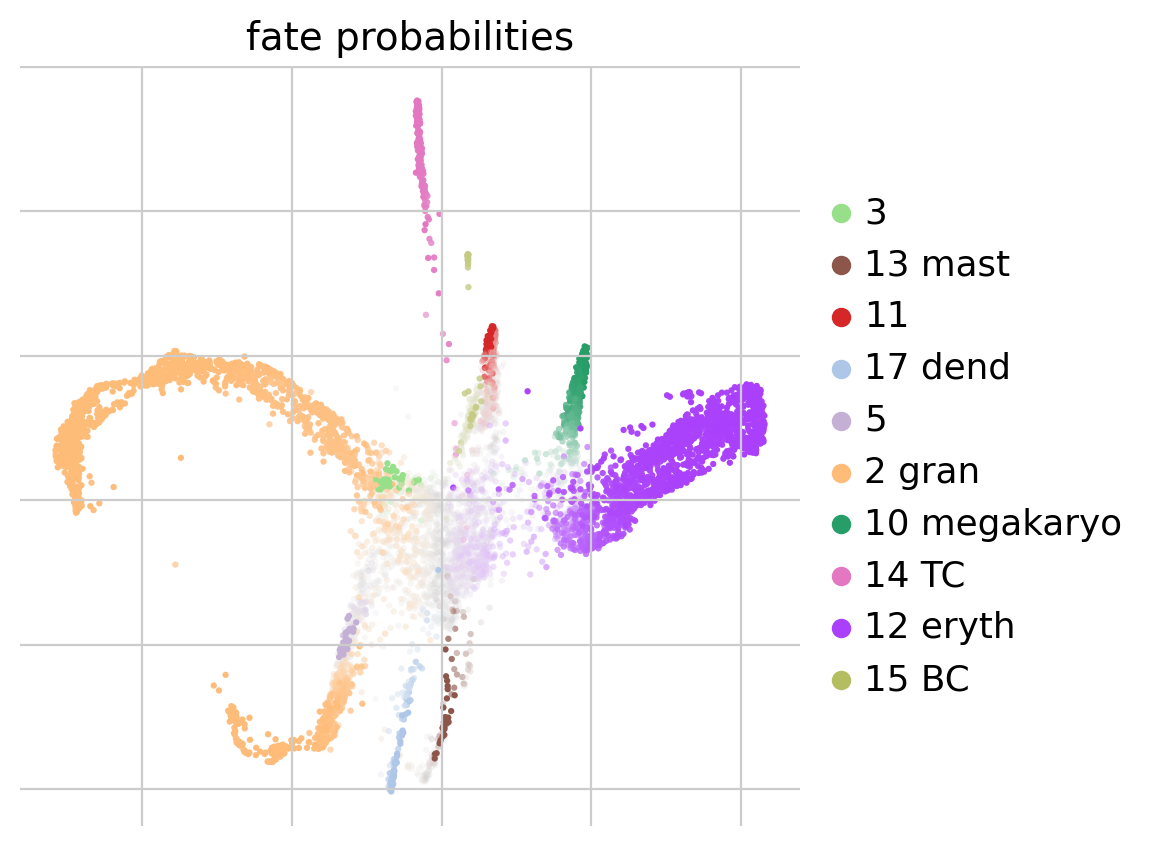

Now that we have calculated our initial and terminal states, we will calculate fate probabilities. Cellrank does this by using Markov Chains, which calculates the probability for each cell to end up at a given terminal state. Later on we will be able to use this information to investigate which genes drive differentiation from a progenitor towards a certain fully differentiated cell type.

#Compute and plot cell fate probabilities

pt_est.compute_fate_probabilities(use_petsc=False, solver="direct")

pt_est.plot_fate_probabilities(legend_loc='right', basis='X_draw_graph_fa')Computing fate probabilities

Adding `adata.obsm['lineages_fwd']`

`.fate_probabilities`

Finish (0:00:01)Now that we have computed the fate probabilities of every cell, we can compute driver genes - Cellrank does this by correlating fate probabilities with gene expression, under the assumption that genes that have a strong correlation with the fate probabilities of a certain lineage might drive differentiatin processes towards the lineages terminal state.

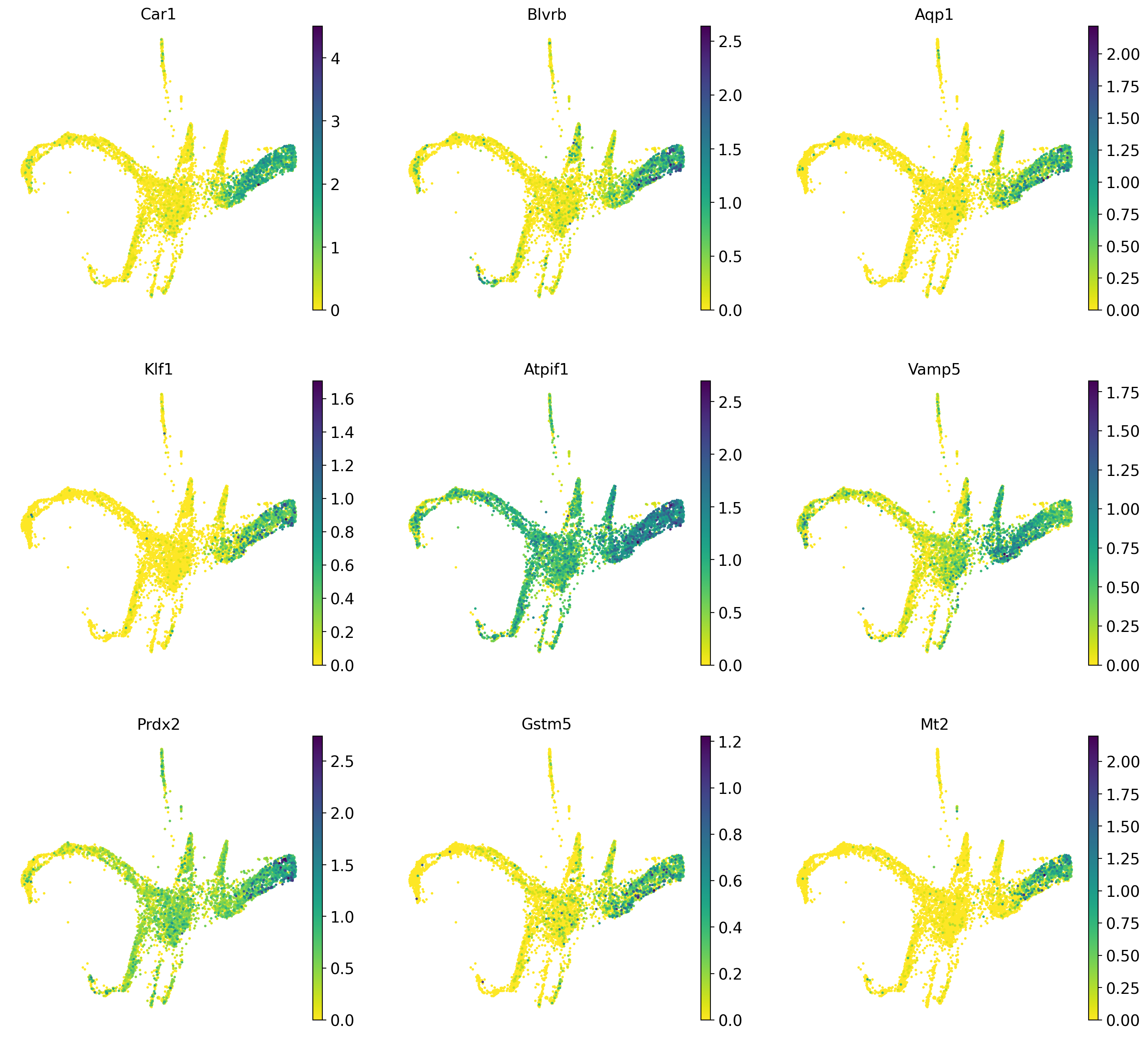

Below we will calculate driver genes for the erythrocyte lineage (12_eryth) and the megakaryocyte lineage (10_megakaryo).

#Calculate lineage drivers

drivers_ery = pt_est.compute_lineage_drivers(lineages=['12_eryth'], cluster_key='annot')

#Sort

drivers_ery_sort = drivers_ery.sort_values(by='12_eryth_corr', ascending=False)

drivers_mega = pt_est.compute_lineage_drivers(lineages=['10_megakaryo'], cluster_key='annot')

drivers_mega_sort = drivers_mega.sort_values(by='10_megakaryo_corr', ascending=False)

#Get top 10 eruythrocyte driver genes

drivers_ery_sort.head(n=10)Adding `adata.varm['terminal_lineage_drivers']`

`.lineage_drivers`

Finish (0:00:00)

Adding `adata.varm['terminal_lineage_drivers']`

`.lineage_drivers`

Finish (0:00:00)| 12_eryth_corr | 12_eryth_pval | 12_eryth_qval | 12_eryth_ci_low | 12_eryth_ci_high | |

|---|---|---|---|---|---|

| Car2 | 0.708006 | 0.0 | 0.0 | 0.694964 | 0.720582 |

| Car1 | 0.696015 | 0.0 | 0.0 | 0.682537 | 0.709020 |

| Blvrb | 0.576591 | 0.0 | 0.0 | 0.559195 | 0.593480 |

| Aqp1 | 0.567340 | 0.0 | 0.0 | 0.549672 | 0.584500 |

| Klf1 | 0.558625 | 0.0 | 0.0 | 0.540706 | 0.576038 |

| Atpif1 | 0.537567 | 0.0 | 0.0 | 0.519057 | 0.555574 |

| Vamp5 | 0.497348 | 0.0 | 0.0 | 0.477774 | 0.516428 |

| Prdx2 | 0.496092 | 0.0 | 0.0 | 0.476486 | 0.515204 |

| Gstm5 | 0.490391 | 0.0 | 0.0 | 0.470643 | 0.509649 |

| Mt2 | 0.490169 | 0.0 | 0.0 | 0.470415 | 0.509433 |

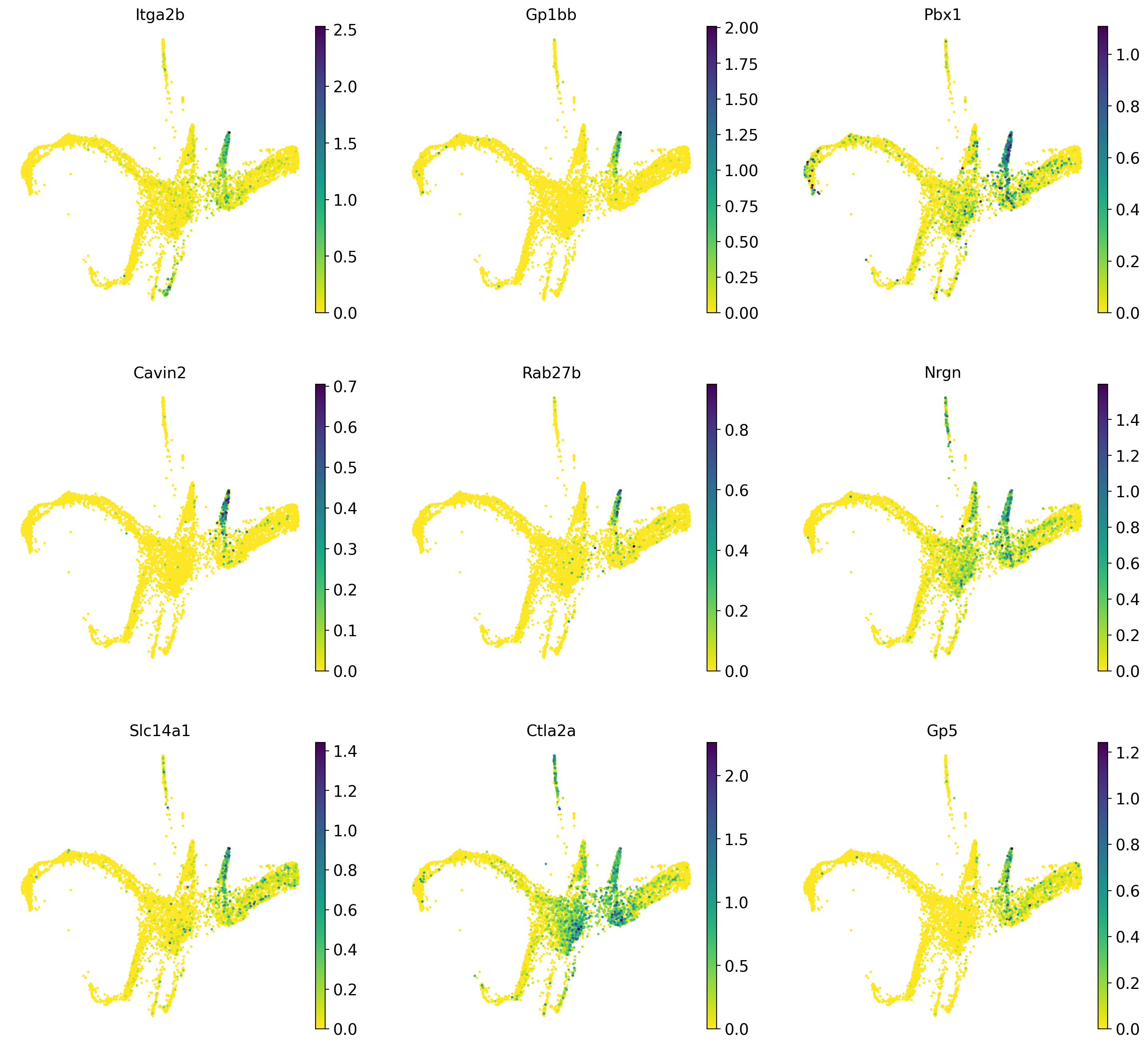

Now we plot the top 10 previously calculated driver genes for the erythrocyte and megakaryocyte lineages.

sc.pl.draw_graph(adata, color=drivers_ery_sort.index.tolist()[1:10], legend_loc='on data', legend_fontsize= 'x-small', use_raw=False, ncols=3)#Also plot the lineage drivers for the granulocyte lineage

sc.pl.draw_graph(adata, color=drivers_mega_sort.index.tolist()[1:10], legend_loc='on data', legend_fontsize= 'x-small', use_raw=False, ncols=3)Discuss amongst yourselves which genes appear to be good markers for the respective lineages and why!

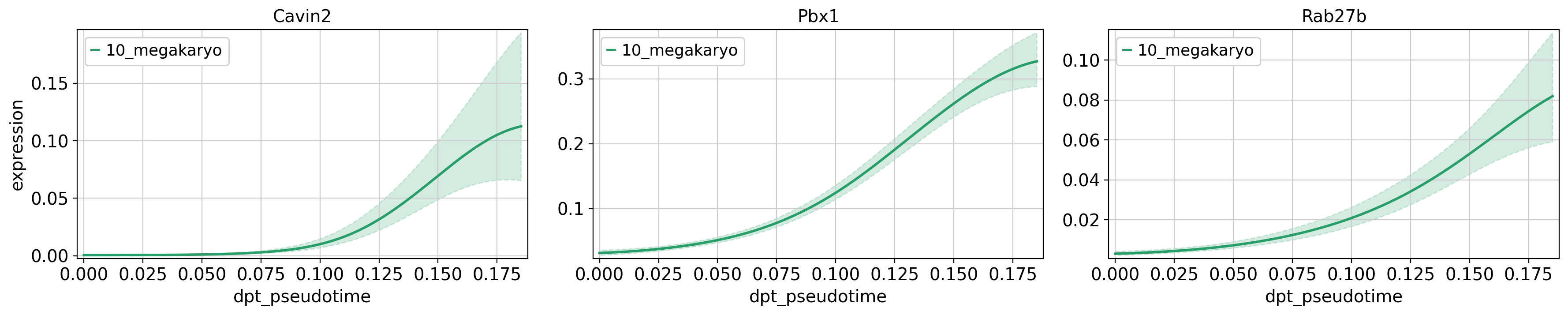

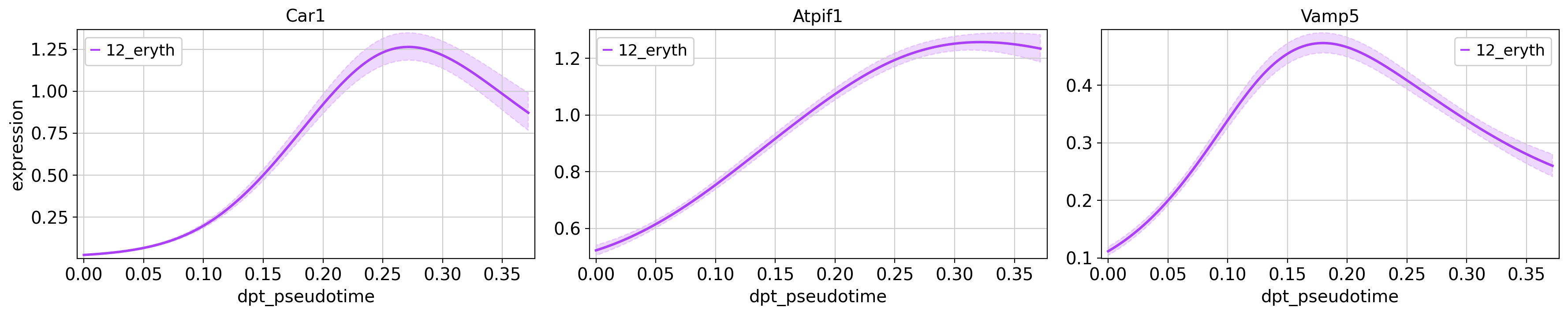

Now we want to look at the expression trends for some of the genes we just identified as potential drivers over pseudotime. Cellrank uses Generalized Additive Models (GAMs) to fit smooth expression curves, so we start by creating such models for our data.

#Fit GAMs

model = cr.models.GAM(adata, n_knots=6)Next, we plot some of the interesting genes of the erythrocyte (Car1, Atpif1, Vamp5) and megakaryocyte (Cavin2, Pbx1, Ctla2a) lineages. We only plot the genes for their respective lineage, in order to compare their expression at different pseudotime points during the lineages developmental trajectory.

cr.pl.gene_trends(adata,

model = model,

genes = ['Car1', 'Atpif1', 'Vamp5'],

same_plot=True,

time_key = 'dpt_pseudotime',

lineages = ['12_eryth'],

hide_cells=True,

ncols=3,

n_jobs=1,

backend='threading')

cr.pl.gene_trends(adata,

model = model,

genes = ['Cavin2', 'Pbx1', 'Rab27b'],

same_plot=True,

time_key = 'dpt_pseudotime',

lineages = ['10_megakaryo'],

hide_cells=True,

ncols=3,

n_jobs=1,

backend='threading')Computing trends using `1` core(s) Finish (0:00:00)

Plotting trendsComputing trends using `1` core(s) Finish (0:00:00)

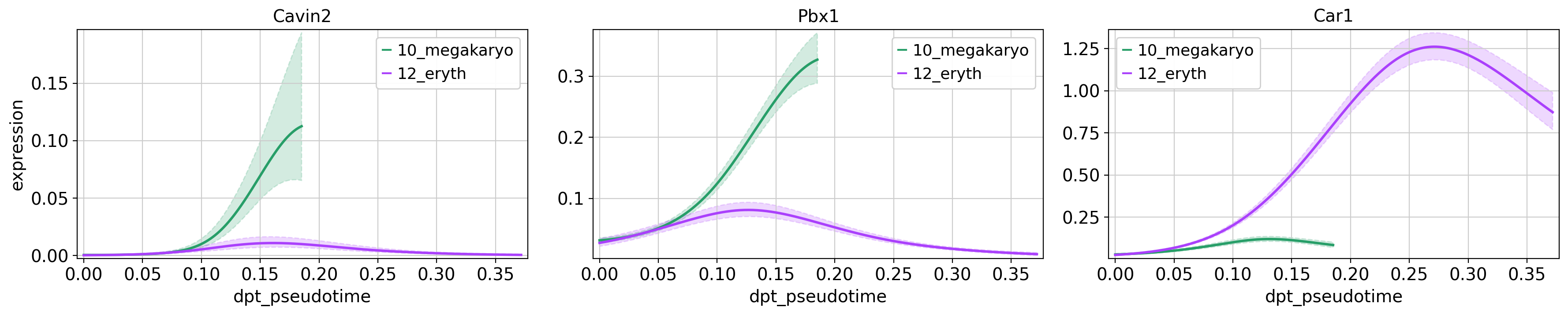

Plotting trendsWhat would this look like if we were to try and compare the gene expression between the two lineages?

#Plot gene trends for erythrocyte and megakaryo lineages

cr.pl.gene_trends(adata,

model = model,

genes = ['Cavin2', 'Pbx1', 'Car1'],

same_plot=True,

time_key = 'dpt_pseudotime',

lineages = ['10_megakaryo', '12_eryth'],

hide_cells=True,

ncols=3,

n_jobs=1,

backend='threading')Computing trends using `1` core(s) Finish (0:00:00)

Plotting trendsWhy do the different lineages in the plots above have different maximum pseudotime values? In how far do you think the gene expression values are comparable across the two lineages?

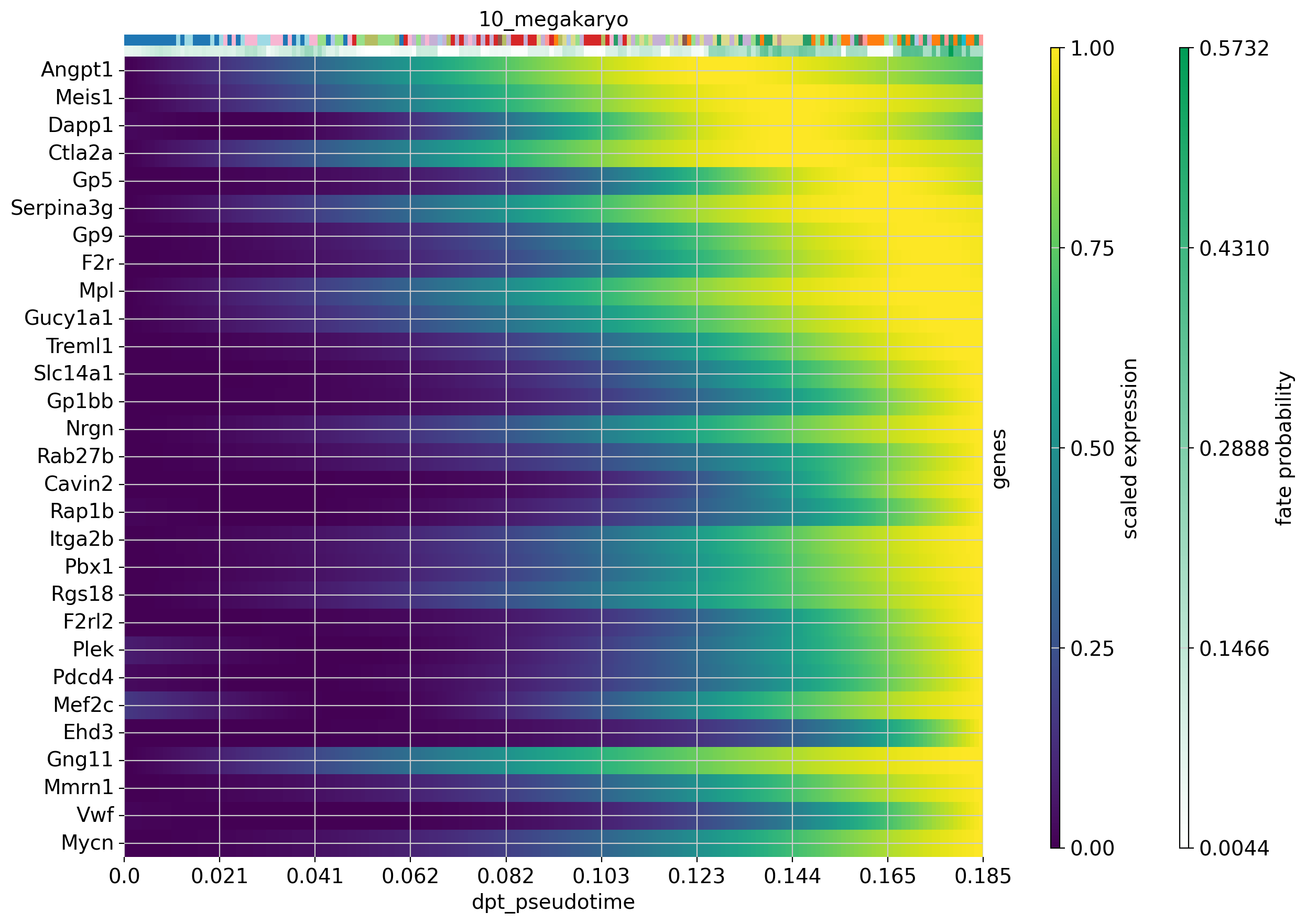

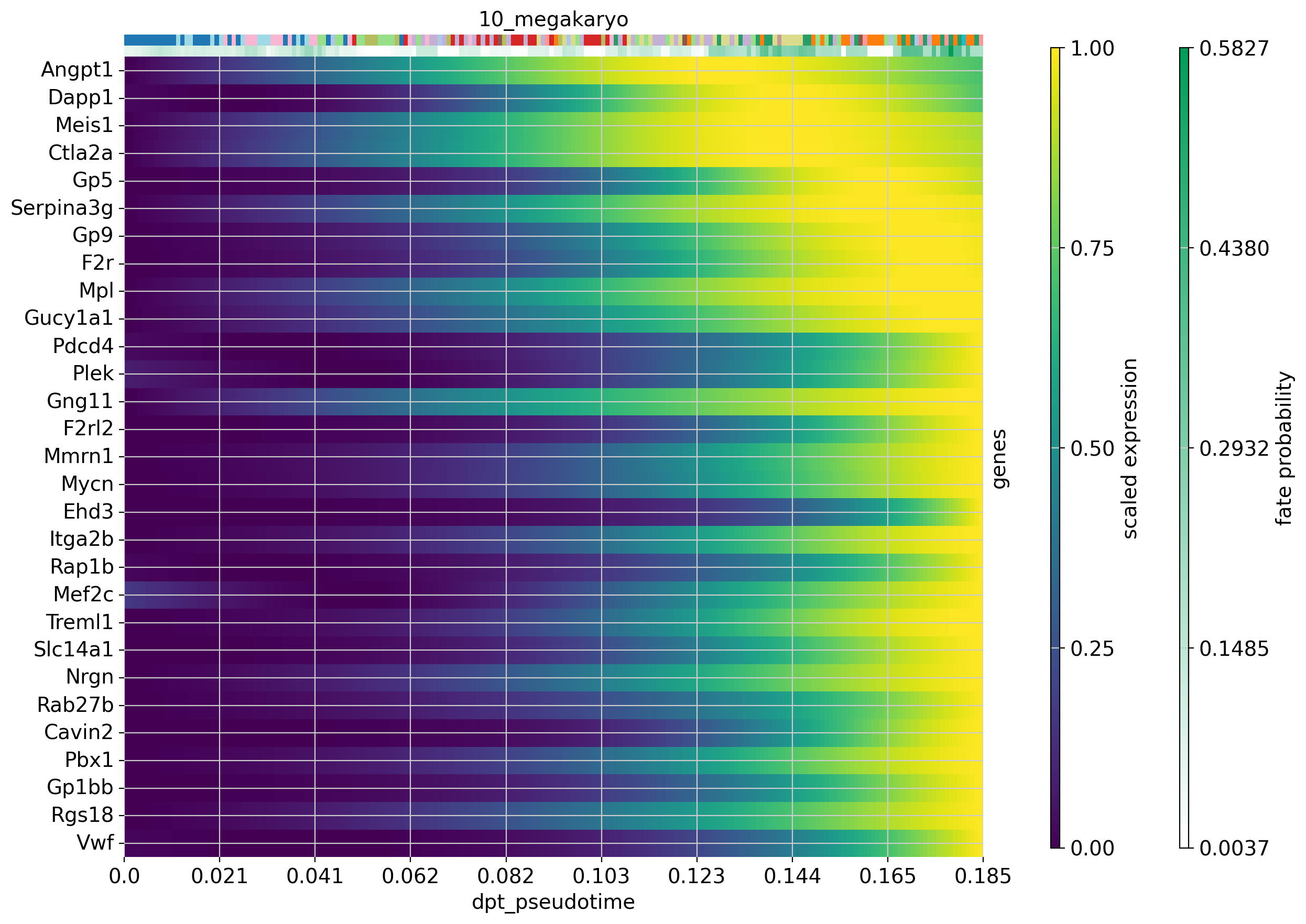

We can also visualize the expression of several genes over pseudotime in a single trajectory. Let’s do this for the 30 top driver genes of the erythrocyte and megakaryocyte lineages. If you have any genes that you are interested in, plot these using the code below.

#Plot expression cascades of several genes over pseudotime

cr.pl.heatmap(

adata,

model=model,

lineages='12_eryth',

cluster_key='annot',

show_fate_probabilities=True,

genes=drivers_ery_sort.index.tolist()[1:30],

time_key='dpt_pseudotime',

figsize=(12,10),

show_all_genes=True

)

cr.pl.heatmap(

adata,

model=model,

lineages='10_megakaryo',

cluster_key='annot',

show_fate_probabilities=True,

genes=drivers_mega_sort.index.tolist()[1:30],

time_key='dpt_pseudotime',

figsize=(12,10),

show_all_genes=True

)Computing trends using `1` core(s) Finish (0:00:01)Computing trends using `1` core(s) Finish (0:00:00)Now that we have visualized expression trends for several genes associated with the our lineages, we could be interested in identifying other genes that follow a specific expression pattern along the trajectory. Let’s say we are interested in genes from the erythrocyte lineage that increase with pseudotime (like Car1) and genes whose expression peaks and then falls off again before the maximum pseudotime value is reached (like Vamp5).

Cellrank provides a way for us to identify genes that have a similar expression along the trajectory, using the cr.pl.cluster_trends function. You can modify the number of clusters by modifying the resolution parameter until you find the clustering to be appropriate.

#Then we cluster trends based on the erythrocyte driver genes

cr.pl.cluster_trends(

adata,

model=model, # use the model from before

lineage="12_eryth",

genes=drivers_ery_sort.index.tolist(),

time_key="dpt_pseudotime",

n_jobs=8,

random_state=0,

clustering_kwargs={"resolution": 0.4, "random_state": 0},

neighbors_kwargs={"random_state": 0},

recompute=True

)Computing trends using `8` core(s) Finish (0:01:30)

WARNING: Unable to fit `5` models.

computing PCA

with n_comps=49

finished (0:00:00)

computing neighbors

using 'X_pca' with n_pcs = 49

finished: added to `.uns['neighbors']`

`.obsp['distances']`, distances for each pair of neighbors

`.obsp['connectivities']`, weighted adjacency matrix (0:00:00)

running Leiden clustering

finished: found 14 clusters and added

'clusters', the cluster labels (adata.obs, categorical) (0:00:00)

Saving data to `adata.uns['lineage_12_eryth_trend']`The results of the clustering trend are saved as an anndata object in adata.uns['lineage_12_eryth_trend']. We will now use this to extract genes that follow a specific trend and plot them.

#Save trends as new anndata object

ery_trends = adata.uns['lineage_12_eryth_trend'].copy()

#Add means and dispersions from original data to the object

cols = ["means", "dispersions"]

ery_trends.obs = ery_trends.obs.merge(

right=adata.var[cols], how="left", left_index=True, right_index=True

)

#Extract genes that follow the expression patterns of the respective clusters

low_genes = list(

ery_trends[ery_trends.obs["clusters"] == "4"]

.obs.sort_values("means", ascending=False)

.index

)

mid_genes = list(

ery_trends[ery_trends.obs["clusters"] == "6"]

.obs.sort_values("means", ascending=False)

.index

)

high_genes = list(

ery_trends[ery_trends.obs["clusters"] == "1"]

.obs.sort_values("means", ascending=False)

.index

)#There are a lot of ribosomal genes in our gene lists - these are probably not as interesting as other genes, so we remove them from our lists right now

low_genes_filt = [str(x) for x in low_genes if not x.lower().startswith(('rpl', 'rps'))]

mid_genes_filt = [str(x) for x in mid_genes if not x.lower().startswith(('rpl', 'rps'))]

high_genes_filt = [str(x) for x in high_genes if not x.lower().startswith(('rpl', 'rps'))]#Plot the selected genes

cr.pl.gene_trends(

adata,

model=model,

lineages="12_eryth",

cell_color="annot",

genes=high_genes_filt[0:3]+mid_genes_filt[0:3]+low_genes_filt[0:3],

same_plot=True,

ncols=3,

time_key="dpt_pseudotime",

hide_cells=True

)Computing trends using `1` core(s) Finish (0:00:00)Plotting trendsYou can visaulize any number of genes in this manner in order to see which ones are interesting for your specific question.

As you can see, we can manipulate the trajectory quite a bit by selecting different number of neighbors, components etc. to fit with our assumptions on the development of these celltypes.

Please explore further how you can tweak the trajectory. For instance, can you create a PAGA trajectory using the orignial UMAP from Seurat instead? Hint, you first need to compute the neighbors on the UMAP.

11 Session info

Click here

sc.logging.print_versions()Dependencies

| Dependency | Version |

|---|---|

| fast-array-utils | 1.3.1 |

| jupyter_core | 5.9.1 |

| pytz | 2025.2 |

| platformdirs | 4.9.2 |

| psutil | 7.2.2 |

| ipython | 9.10.0 |

| tqdm | 4.67.3 |

| fa2_modified | 0.4 |

| llvmlite | 0.46.0 |

| pyzmq | 27.1.0 |

| scikit-learn | 1.6.1 |

| traitlets | 5.14.3 |

| ipykernel | 7.2.0 |

| joblib | 1.5.3 |

| pillow | 12.1.1 |

| typing_extensions | 4.15.0 |

| pygpcca | 1.0.4 |

| patsy | 1.0.2 |

| six | 1.17.0 |

| numba | 0.63.1 |

| PyYAML | 6.0.3 |

| pynndescent | 0.5.13 |

| numcodecs | 0.16.5 |

| debugpy | 1.8.20 |

| prompt_toolkit | 3.0.52 |

| seaborn | 0.13.2 |

| tornado | 6.5.3 |

| pyparsing | 3.3.2 |

| google-crc32c | 1.8.0 |

| docrep | 0.3.2 |

| matplotlib-inline | 0.2.1 |

| kiwisolver | 1.4.9 |

| progressbar2 | 4.5.0 |

| h5py | 3.15.1 |

| Pygments | 2.19.2 |

| wcwidth | 0.6.0 |

| igraph | 1.0.0 |

| jupyter_client | 8.8.0 |

| python-dateutil | 2.9.0.post0 |

| msgpack | 1.1.2 |

| sparse | 0.18.0 |

| pure_eval | 0.2.3 |

| xarray | 2026.2.0 |

| fsspec | 2026.2.0 |

| texttable | 1.7.0 |

| comm | 0.2.3 |

| setuptools | 82.0.0 |

| executing | 2.2.1 |

| session-info2 | 0.4 |

| packaging | 26.0 |

| pygam | 0.12.0 |

| charset-normalizer | 3.4.4 |

| umap-learn | 0.5.11 |

| threadpoolctl | 3.6.0 |

| donfig | 0.8.1.post1 |

| natsort | 8.4.0 |

| python-utils | 3.9.1 |

| statsmodels | 0.14.6 |

| slepc4py | 3.24.2 |

| legacy-api-wrap | 1.5 |

| decorator | 5.2.1 |

| cycler | 0.12.1 |

| leidenalg | 0.11.0 |

| jedi | 0.19.2 |

| backports.zstd | 1.3.0 |

| zarr | 3.1.5 |

| wrapt | 2.1.1 |

| stack_data | 0.6.3 |

| networkx | 3.6.1 |

| parso | 0.8.6 |

| asttokens | 3.0.1 |

Copyable Markdown

| Package | Version | | ---------- | ------- | | petsc4py | 3.24.4 | | numpy | 2.3.5 | | pandas | 2.3.3 | | matplotlib | 3.10.8 | | scanpy | 1.12 | | scipy | 1.16.3 | | cellrank | 2.0.7 | | scvelo | 0.3.4 | | anndata | 0.12.10 | | Dependency | Version | | ------------------ | ----------- | | fast-array-utils | 1.3.1 | | jupyter_core | 5.9.1 | | pytz | 2025.2 | | platformdirs | 4.9.2 | | psutil | 7.2.2 | | ipython | 9.10.0 | | tqdm | 4.67.3 | | fa2_modified | 0.4 | | llvmlite | 0.46.0 | | pyzmq | 27.1.0 | | scikit-learn | 1.6.1 | | traitlets | 5.14.3 | | ipykernel | 7.2.0 | | joblib | 1.5.3 | | pillow | 12.1.1 | | typing_extensions | 4.15.0 | | pygpcca | 1.0.4 | | patsy | 1.0.2 | | six | 1.17.0 | | numba | 0.63.1 | | PyYAML | 6.0.3 | | pynndescent | 0.5.13 | | numcodecs | 0.16.5 | | debugpy | 1.8.20 | | prompt_toolkit | 3.0.52 | | seaborn | 0.13.2 | | tornado | 6.5.3 | | pyparsing | 3.3.2 | | google-crc32c | 1.8.0 | | docrep | 0.3.2 | | matplotlib-inline | 0.2.1 | | kiwisolver | 1.4.9 | | progressbar2 | 4.5.0 | | h5py | 3.15.1 | | Pygments | 2.19.2 | | wcwidth | 0.6.0 | | igraph | 1.0.0 | | jupyter_client | 8.8.0 | | python-dateutil | 2.9.0.post0 | | msgpack | 1.1.2 | | sparse | 0.18.0 | | pure_eval | 0.2.3 | | xarray | 2026.2.0 | | fsspec | 2026.2.0 | | texttable | 1.7.0 | | comm | 0.2.3 | | setuptools | 82.0.0 | | executing | 2.2.1 | | session-info2 | 0.4 | | packaging | 26.0 | | pygam | 0.12.0 | | charset-normalizer | 3.4.4 | | umap-learn | 0.5.11 | | threadpoolctl | 3.6.0 | | donfig | 0.8.1.post1 | | natsort | 8.4.0 | | python-utils | 3.9.1 | | statsmodels | 0.14.6 | | slepc4py | 3.24.2 | | legacy-api-wrap | 1.5 | | decorator | 5.2.1 | | cycler | 0.12.1 | | leidenalg | 0.11.0 | | jedi | 0.19.2 | | backports.zstd | 1.3.0 | | zarr | 3.1.5 | | wrapt | 2.1.1 | | stack_data | 0.6.3 | | networkx | 3.6.1 | | parso | 0.8.6 | | asttokens | 3.0.1 | | Component | Info | | --------- | ------------------------------------------------------------------------------ | | Python | 3.12.12 | packaged by conda-forge | (main, Jan 26 2026, 23:51:32) [GCC 14.3.0] | | OS | Linux-6.12.5-linuxkit-x86_64-with-glibc2.35 | | CPU | 16 logical CPU cores, x86_64 | | GPU | No GPU found | | Updated | 2026-04-17 10:30 |