my_cars <- mtcars[, c(1:4, 10)]

my_cars <- my_cars[my_cars$disp > mean(my_cars$disp), ]

my_cars <- colMeans(my_cars)Welcome to the hands-on workshop “Tidy Work in Tidyverse”. Most of the functions necessary to complete the tutorials and challenges were covered in the lecture. However, sometimes the tasks require that you check the docs or search online. Our solutions are not the only possible ones! Let us know if you can do better or solve things in a different way!

If stuck, look at hints, next do some google searches and, if still stuck, turn to a TA.

It is a lot of material, we know! Do not feel bad if you do not solve all the tasks. If you completed Challenge 3, you have used all the most important features of tidyverse! Good luck!

1 General exercises

Datasets are available here.

1.1 Pipes

1.1.1 Chunk 1

Rewrite the following code chunks as pipes (Load package magrittr because tidyverse supports only the %>% pipe!):

This is our solution:

Code

mtcars %>%

select(c(1:4, 10)) %>%

filter(disp > mean(disp)) %>%

colMeans() -> my_carsWhat is wrong with our solution?

- It is better to have the result assigned on the left hand side: result <- expression. In this case the expression is the whole pipe.

- Our ‘expression -> result’ is correct but can easily be missed when reading the code.

1.1.2 Chunk 2

The summary(x) function is a bit special: when you type summary(x) in the console, print is called in an implicit way. Pipe call does not do such implicite call, so you will have to invoke print in an explicit way. But the %T>% does unbranch for one call only, you will have to make printing of the summary a one single composed call using {}. Try to wrap your mind around this. If in doubt, turn to a TA.

summary(cars)

colSums(cars)Code

cars %T>% {print(summary(.))} %>% colSums()1.1.3 Chunk 3

Rewrite the following correlations using pipes.

cor(mtcars$gear, mtcars$mpg)Code

mtcars %$% cor(gear, mpg)cor(mtcars)Code

mtcars %>% cor()1.1.4 Chunk 4

Given is the dim_summary(nrows, ncols) function which takes nrows and ncols as arguments and prints this info:

dim_summary <- function(nrows, ncols) {

print(

paste0('Matrix M has: ', nrows, ' rows and ', ncols, ' columns.')

)

}Rewrite each of the code chunks below using pipes:

distr1 <- rnorm(16)

M <- matrix(distr1, ncol = 4)

plot(M)

M <- M + sample(M)

dim_summary(nrows = nrow(M), ncols = ncol(M))distr2 <- rnorm(16)

N <- matrix(distr2, ncol = 4)

colnames(N) <- (letters[1:4])

summary(N)

N <- N + 0P <- M %x% t(N)

heatmap(P)

colnames(P) <- letters[1:dim(P)[2]]

cor(P[ ,'a'], P[ ,'i'])Beware of a class of functions, called replacement functions. These beasts are of the form: function(arguments) <- value and rownames(x) <- c('a', 'b', 'c') is a good example of such beast. When writing pipes, we have bear in mind that whole function <- is the name of the replacement function and thus we have to use it as such in the pipe enquoted using backticks. Yes, we know… but you wont see this too often.

Sometimes, it may not be possible to put everything into one single pipe and the results of running two or more pipes have to be used in the final pipe.

Code

dim_summary <- function(nrows, ncols) {

print(paste0('Matrix M has: ', nrows, ' rows and ', ncols, ' columns.'))

}

M <- rnorm(16) %>%

matrix(ncol = 4) %T>%

plot() %>%

`+`(., sample(.)) %T>%

{dim_summary(nrow(.), ncol(.))}

N <- rnorm(16) %>%

matrix(ncol = 4) %>%

`colnames<-`(letters[1:4]) %T>%

summary() %>% `+`(., 0)

P <- M %>%

`%x%`(., t(N)) %T>%

heatmap() %>%

`colnames<-`(letters[1:dim(.)[2]]) %>%

as_data_frame() %$%

cor(a, i)1.2 Tibbles

1.2.1 Task 1

- Convert the

mtcarsdataset to a tibblevehicles. - Select the number of cylinders (

cyl) variable using:- the

[[index]]accessor, - the

[[string]]accessor, - the

$accessor.

- the

- Do the same selection as above, but using pipe and placeholders (use all three ways of accessing a variable).

- Print the tibble.

- Print the 30 first rows of the tibble.

- Change the default behavior of printing a tibble so that at least 15 and at most 30 rows are printed.

- What is the difference between the

tibble.print_maxanddplyr.print_min? Is there any? Test it. - Convert

vehiclesback to adata.framecalledautomobiles.

Code

# 1

vehicles <- mtcars %>% as_tibble()

# 2

vehicles[['cyl']]

vehicles[[2]]

vehicles$cyl

# 3

vehicles %T>%

{print(.[['cyl']])} %T>%

{print(.[[2]])} %>%

.$cyl

# 4

vehicles

# 5

vehicles %>% head(n = 30)

# 6

options(tibble.print_min = 15, tibble.print_max = 30)

# 7

# In theory there should be no difference. dplyr imports tibble from the tibble package

# and dplyr.width, dplyr.print_min and dplyr.print_min are passed down to the tibble.

# But test both behaviours. First with only the tibble package loaded, later with dplyr # loaded.

# 8

automobiles <- as.data.frame(vehicles)1.2.2 Task 2

Create the following tibble using tribble():

Code

tab <- tribble(

~id, ~event, ~date,

1, 'success', '24-04-2017',

2, 'failed', '25-04-2017',

3, 'failed', '25-04-2017',

4, 'success', '27-04-2017'

)1.2.3 Task 3

Compare the performance of as.data.frame(), as_data_frame() and as_tibble() on a 100 x 30 matrix filled with some random integers. Use package microbenchmark. Fill in your result here in the Tidyverse Lab sheet, Tibbles – performance.

Code

tst <- replicate(30, sample(100), simplify = TRUE)

colnames(tst) = paste0(rep('col', times = dim(tst)[2]), 1:dim(tst)[2])

microbenchmark::microbenchmark(

as.data.frame(tst),

as_data_frame(tst),

as_tibble(tst)

)1.2.4 Task 4

Do you think tibbles are lazy? Try to create a tibble that tests whether lazy evaluation applies to tibbles too.

Code

tibble(x = sample(1:10, size = 10, replace = T), y = log10(x))1.3 Parsing

Parse the following vectors using parse_ functions:

vec1 <- c(1, 7.2, 3.84, -5.23)– parse it asdouble(any problems? why?).- Now, parse the same vector

c(1, 7.2, 3.84, -5.23)asinteger. What happens? - Can you still parse it as

integersomehow? - Parse as double

vec2 <- c('2', '3,45', '?', '-7,28') - Parse correctly

vec3 <- c('2', '3,45', '?', '-7.28') - Parse the following guessing the parser:

vec4 <- c('barrel: 432.7$', 'liter: 15.42PLN', 'gallon costs approx 32.1SEK', 'sunny, wind gusts up till 55m/s') - Can you parse

vec4as number? Do it if you can. - Parse

vec5 <- "25 Dec 2015"as date (hint:?parse_date()). - Parse

10_Jul_1410as date.

Code

vec1 <- c(1, 7.2, 3.84, -5.23)

vec2 <- c('2', '3,45', '?', '-7,28')

vec3 <- c('2', '3,45', '?', '-7.28')

vec4 <- c('barrel: 432.7$', 'liter: 15.42PLN', 'gallon costs approx 32.1SEK', 'sunny, wind gusts up till 55m/s')

vec5 <- "25 Dec 2015"

parse_double(vec1)

parse_integer(vec1)

parse_integer(as.integer(vec1)) # Is it the best way? Hint: rounding.

parse_double(vec2, na = '?', locale = locale(decimal_mark = ','))

parse_number(vec3, na = '?', locale = locale(decimal_mark = '.'))

guess_parser(vec4)

parse_guess(vec4)

# Yes, you can:

parse_number(vec4)

parse_date(vec5, format="%d %b %Y")

parse_date("10_Jul_1410", format="%d%.%b%.%Y")2 NYC flights Challenge

The nycflights13 package contains information about all flights that departed from NYC (i.e., EWR, JFK and LGA) in 2013: 336,776 flights with 16 variables. To help understand what causes delays, it also includes a number of other useful datasets: weather, planes, airports, airlines. We will use it to train working with tibbles and dplyr.

2.1 Task 1: Selecting column

- Load the

nycflights13package (install if necessary). - Read about the data in the package docs.

- Inspect the

flightstibble. - Select all columns but

carrierandarr_time. - Select

carrier,tailnumandorigin. - Hide columns from

daythroughcarrier. - Select all columns that have to do with

arrival (hint:?tidyselect). - Select columns based on a vector

v <- c("arr_time", "sched_arr_time", "arr_delay"). - Rename column

desttodestinationusing:select()andrename()

What is the difference between the two approaches?

Code

install.packages('nycflights13')

library('nycflights13')

?nycflights13

flights

flights %>% select(-carrier, -arr_time)

flights %>% select(carrier, tailnum, origin)

flights %>% select(-(day:carrier))

flights %>% select(contains('arr_')) # or

v <- c("arr_time", "sched_arr_time", "arr_delay")

flights %>% select(v) # ambiguous, or better

flights %>% select(all_of(v))

flights %>% select(destination = dest)

flights %>% rename(destination = dest)

# select keeps only the renamed column while rename returns the whole dataset

# with the column renamed.2.2 Task 2: Filtering rows

- Filter only the flights that arrived ahead of schedule.

Code

flights %>% filter(arr_delay < 0)- Filter the flights that had departure delay between 10 and 33.

Code

flights %>% filter(dep_delay >= 10, dep_delay <= 33) # or

flights %>% filter(between(dep_delay, 10, 33))- Fish out all flights with unknown arrival time.

Code

flights %>% filter(is.na(arr_time))- Retrieve rows 1234:1258 (hint:

?slice).

Code

flights %>% slice(1234:1258)- Sample (

?sample_n()) 3 random flights per day in March.

Code

nycflights13::flights %>% filter(month == 3) %>%

group_by(day) %>%

slice_sample(n = 3)- Show 5 most departure-delayed flights in January per carrier.

Code

nycflights13::flights %>%

filter(month == 1) %>%

group_by(carrier) %>%

slice_max(dep_delay, n = 5)- Retrieve all

unique()routes and sort them by destination.

Code

nycflights13::flights %>%

select(origin, dest) %>%

unique() %>%

arrange(dest)

nycflights13::flights %>%

mutate(route = paste(origin, dest, sep="-")) %>%

select(route) %>%

unique()- Retrieve all

distinct()routes and sort them by destination.

Code

nycflights13::flights %>%

select(origin, dest) %>%

distinct() %>%

arrange(dest)

# or

flights %>%

mutate(route = paste(origin, dest, sep="-")) %>%

distinct(route)- Is

unique()more efficient thandistinct()?

Code

microbenchmark::microbenchmark(

unique = nycflights13::flights %>%

select(origin, dest) %>%

unique() %>%

arrange(dest),

distinct = nycflights13::flights %>%

distinct(origin, dest) %>%

arrange(dest),

times = 10L

)

# Distinct is faster.2.3 Task 3: Trans(mutations)

air_timeis the amount of time in minutes spent in the air. Add a new columnair_spdthat will contain aircraft’s airspeed in mph.- As above, but keep only the new

air_spdvariable. - Use

rownames_to_column()onmtcarsto add car model as an extra column.

Code

flights %>% mutate(air_spd = distance/(air_time / 60))

flights %>% transmute(air_spd = distance/(air_time / 60))

mtcars %>% rownames_to_column('model')2.4 Task 4: Groups and counts

- Use

group_by(),summarise()andn()to see how many planes were delayed (departure) every month.

Code

flights %>%

filter(dep_delay > 0) %>%

group_by(month) %>%

summarise(num_dep_delayed = n())- Do the same but using

tally()andcount().

Code

flights %>%

filter(dep_delay > 0) %>%

group_by(month) %>%

tally()

flights %>%

filter(dep_delay > 0) %>%

count(month)- What was the mean

dep_delayper month?

Code

flights %>%

group_by(month) %>%

summarise(mean_dep_delay = mean(dep_delay, na.rm = T))- Count the number of incoming delayed flights from each unique origin and sort origins by this count (descending).

Code

flights %>%

filter(arr_delay > 0) %>%

group_by(origin) %>%

summarise(cnt = n()) %>%

arrange(desc(cnt))- Do the same using

tally()

Code

flights %>%

filter(arr_delay > 0) %>%

group_by(origin) %>%

tally(sort = T)- Use

summarise()to sum totaldep_delayper month in hours.

Code

flights %>%

group_by(month) %>%

summarize(tot_dep_delay = sum(dep_delay/60, na.rm = T))- Use the

wtparameter ofcount()(works withtally()too) to achieve the same.

Code

flights %>%

group_by(month) %>%

count(wt = dep_delay/60)- Run

group_size()oncarrierwhat does it return?

Code

flights %>%

group_by(carrier) %>%

group_size()- Use

n_groups()to check the number of unique origin-carrier pairs.

Code

flights %>%

group_by(carrier) %>%

n_groups()ungroup

Depending on the version of dplyr, you may or may need to use the ungroup() if you want to group your data on some other variables. In the newer versions, summarise and mutate drop one aggregation level.

flights %>%

group_by(origin) %>%

mutate(mean_delay_orig = (mean(dep_delay, na.rm = T) + mean(arr_delay, na.rm = T)) / 2) %>%

ungroup() %>%

group_by(carrier) %>%

mutate(mean_delay_carr = (mean(dep_delay, na.rm = T) + mean(arr_delay, na.rm = T)) / 2) %>%

select(origin, carrier, mean_delay_orig, mean_delay_carr)2.5 Task 5: Joins

Given the following tibbles set1 and set2:

set1 <- tribble(

~id, ~color,

'id1', 'grey',

'id1', 'red',

'id2', 'green',

'id3', 'blue'

)

set2 <- tribble(

~id, ~size,

'id2', 'XL',

'id3', 'M',

'id4', 'M'

)

set1

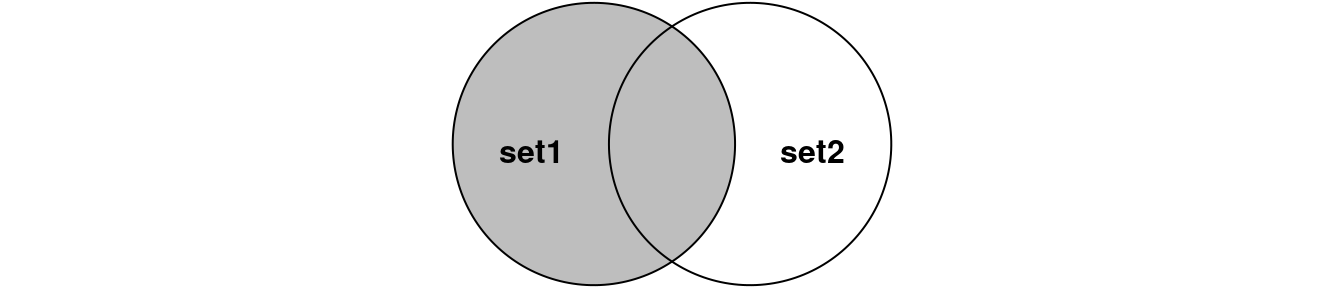

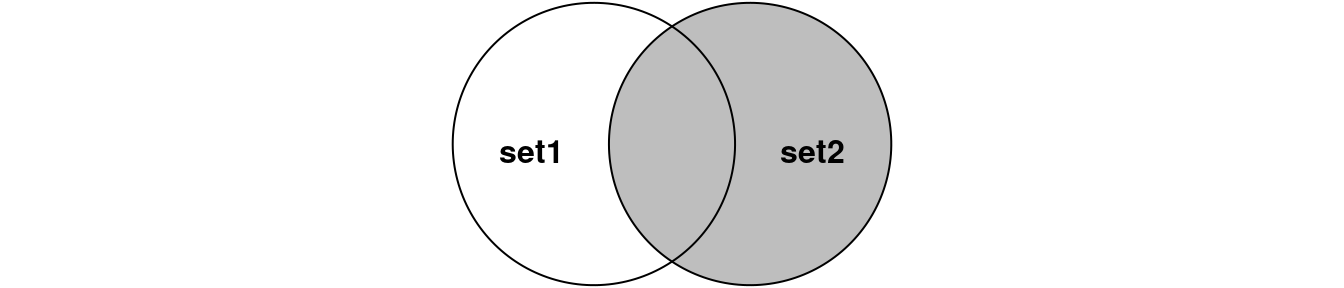

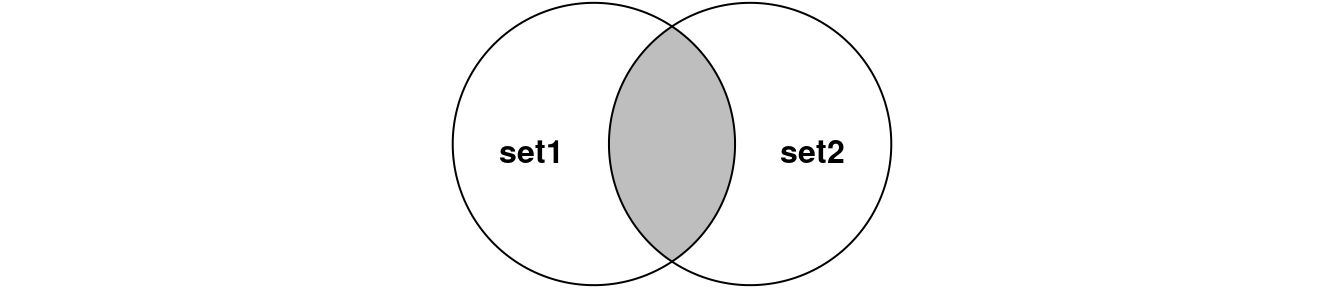

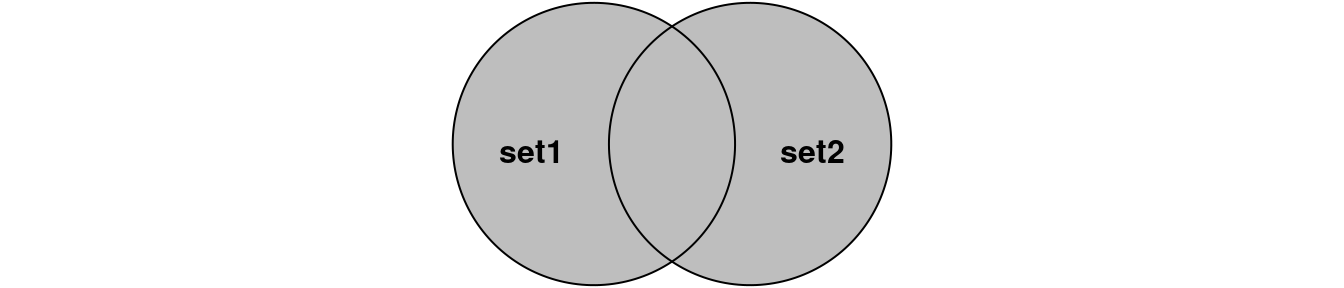

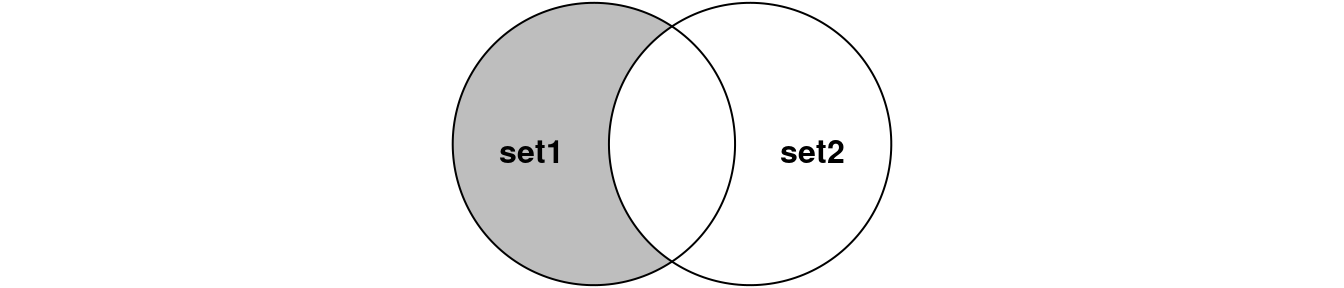

set2Perform joins on id that result in the grey area from the Venn diagrams below. We have not talked about all possible joins, so read the docs if you do not know which join to use.

Code

left_join(set1, set2, by = 'id')Code

right_join(set1, set2, by = 'id')Code

inner_join(set1, set2, by = 'id') # or

semi_join(set1, set2, by = 'id') # semi_join removes duplicates in x

# and also returns only columns from x.Code

full_join(set1, set2, by = 'id') # orCode

anti_join(set1, set2, by = 'id')3 Tidying data

Now time to do some data tidying. First install a package with some untidy data:

#renv::install("rstudio/EDAWR")

library(EDAWR)- Tidy

casesso that years are not in separate columns, but in the column calledyearcontaining a value per each year.

Code

tidy_cases <- cases %>%

pivot_longer(-country, names_to = "year", values_to = "count")- Now time for the

pollutiondataset. Tidy it so that there separate columns forlargeandsmallpollution values.

Code

tidy_pollution <- pollution %>%

pivot_wider(city, names_from = size, values_from = amount)- The

stormsdataset contains thedatecolumn. Make it into 3 columns:year,monthandday. Store the result astidy_storms.

Code

tidy_storms <- storms %>%

separate(col = date,

into = c("year", "month", "day"),

sep = "-")- Now, merge

year,monthanddayintidy_stormsinto adatecolumn again but in the “DD/MM/YYYY” format.

Code

tidy_storms %>% unite(col = "date", 4:6, sep = "/")4 Nanopore Channel Activity Challenge

4.1 Introduction

You will be given a fastq file coming from Oxford Nanopore MinION sequencer This file contains test reads from the chicken genome. The flow-cell used here has 512 channels, each channel consists of 4 pores and only one pore is active at a time. Once your sequence gets stuck for some reason, the device will attempt to remove it from the pore by playing with reversing polarity on that pore. If this was successful, the pore will be re-used. Your task will be to visualize reading events from the meta-data in the fastq dataset and to see how each and every channel behaved. Also, you will plot the distribution of reading times.

Datasets are available here.

4.2 Preparations

First, we will need to load the necessary libraries. I will give you a hint – you need the following libraries:

here– not necessary, but it is an elegant way of reading the data locally from the project folder,tidyverse– well, quite obvious why,ShortReadfrom Bioconductor – to deal with short reads infastq,lubridate– to figure out reading times.

library(here)

library(tidyverse)

#BiocManager::install("ShortRead")

library(ShortRead)

library(lubridate)4.3 Reading data

Now, let’s read the fastq data. Check ShortRead documentation to see how to read our fastq file. Also, try to use package here. If you write: data <- here::here('data/my.fastq'), the my.fastq file will be read from the data folder which is a sub folder of your project root, i.e. the folder where your r script is. It is a good practice and also prevents Jenny Bryan from coming to your office and setting your computer on fire.

Now think a bit, to plot reading events, do we need all the data in the file or only some specific part? You may want to see some few first lines of the fastq to learn about the data structure.

Download fastq file.

raw_data <- "assets/FUL1_fastqs_GRID2.fastq"

# if file doesn't exist, download and uncompress it

if (!file.exists(raw_data)) {

library(googledrive)

drive_deauth()

drive_user()

drive_download(

drive_get(as_id("1FCKf19JtiI-OJMKLKHfsC2X2TMuwddzJ")),

path = "assets/FUL1_fastqs_GRID2.fastq.gz",

overwrite = TRUE

)

R.utils::gunzip("assets/FUL1_fastqs_GRID2.fastq.gz")

}Read fastq file.

f <- ShortRead::FastqFile(raw_data)

rfq <- ShortRead::readFastq(f)

headers <- rfq@id

close(f)4.4 Extracting information you need

In this step, we are extracting data from fastq headers of each and every read in the fastq file. Not super efficient and perhaps the slowest step of the whole analyses. Can you do it better than our example solution?

Desired output: a table (tibble/data.frame) with reads as rows and meta-data as columns.

We will use the stringr package for string manipulation. Use str_split() to explode string data into columns and str_remove_all() to get rid of unwanted characters.

- To split string on a particular character, group of characters use

str_split. Here we split on comma.

text <- "This text is long, or not?"

str_split(text, ',')- To remove everything following a given character, e.g. comma:

str_remove_all(text, ",.*")Code

data <- dplyr::as_tibble(matrix(NA_character_, ncol = 6, nrow = length(headers)), .name_repair = 'minimal')

colnames(data) <- c('id', 'run_id', 'sample_id', 'read', 'channel', 'start_time')

for (i in 1:length(headers)) {

data[i,] <- toString(headers[[i]]) %>%

strsplit(' ') %>%

unlist() %>%

str_remove_all(".*=") %>% t()

}4.5 Preparing tidy dataset

Now, the fun part begins:

- Add column

start_dttmthat represents start time for a given read as properdatetimeobject (readlubridatedocs) and - Add column

chanthat is the proper numeric representation of the channel, - Group reads by channel,

- Arrange them by time,

- Add time to next read (NA if this was the last read) and

- Sort by channel again.

Read about lead()

Code

data2 <- data %>%

mutate(start_dttm = as_datetime(start_time)) %>%

mutate(chan=as.numeric(channel)) %>%

group_by(chan) %>%

arrange(start_dttm) %>%

mutate(time_diff = lead(start_dttm) - start_dttm) %>%

arrange(chan)4.6 Plotting events per channel

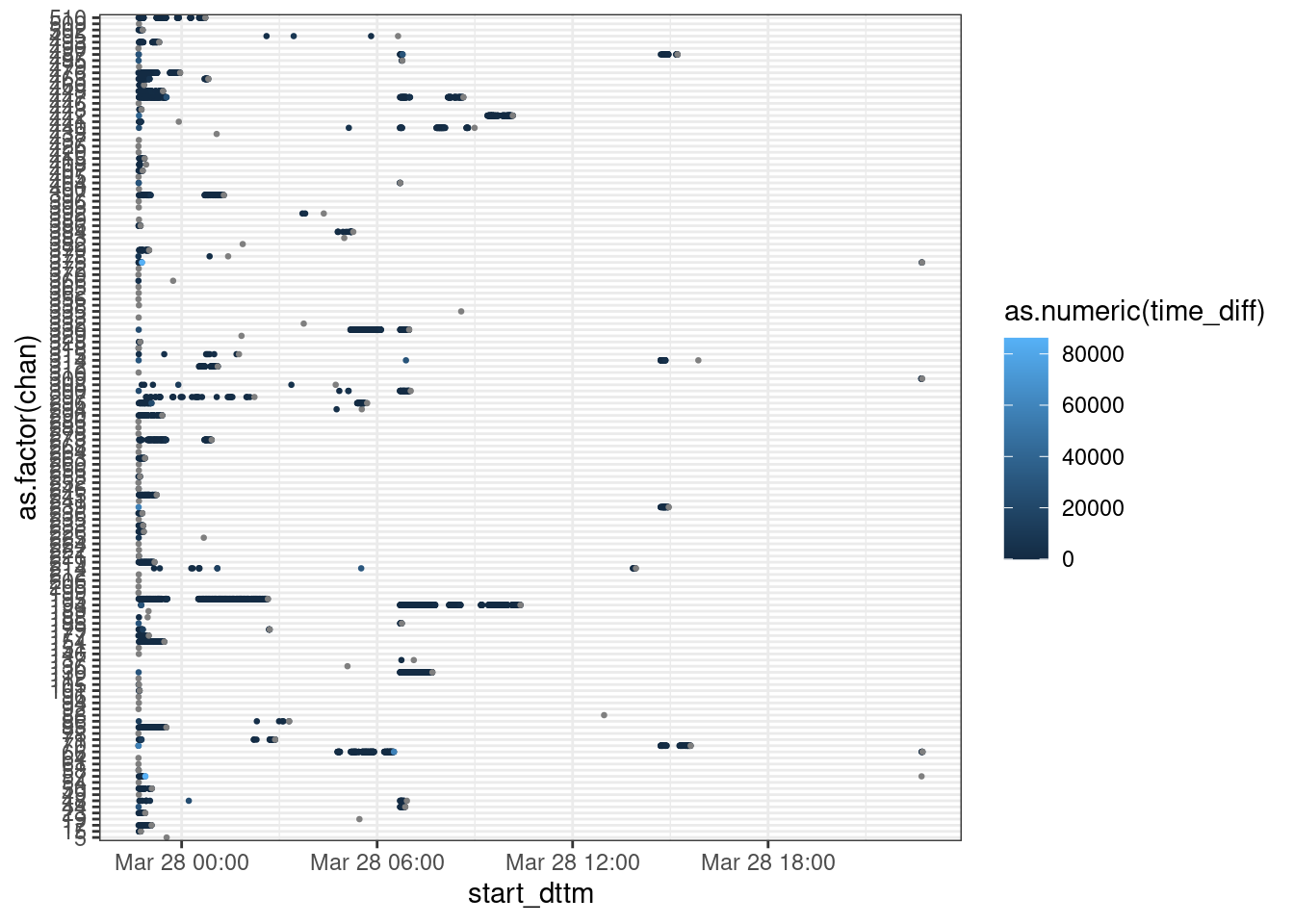

Here, we want to see what was happening in each channel over time. Plot the data you have just prepared so that:

- Each point is the start of a new read,

- Colour corresponds to the lag to the next read.

Can you visualize this in a better way? Different geometry?

4.7 Distribution of time intervals

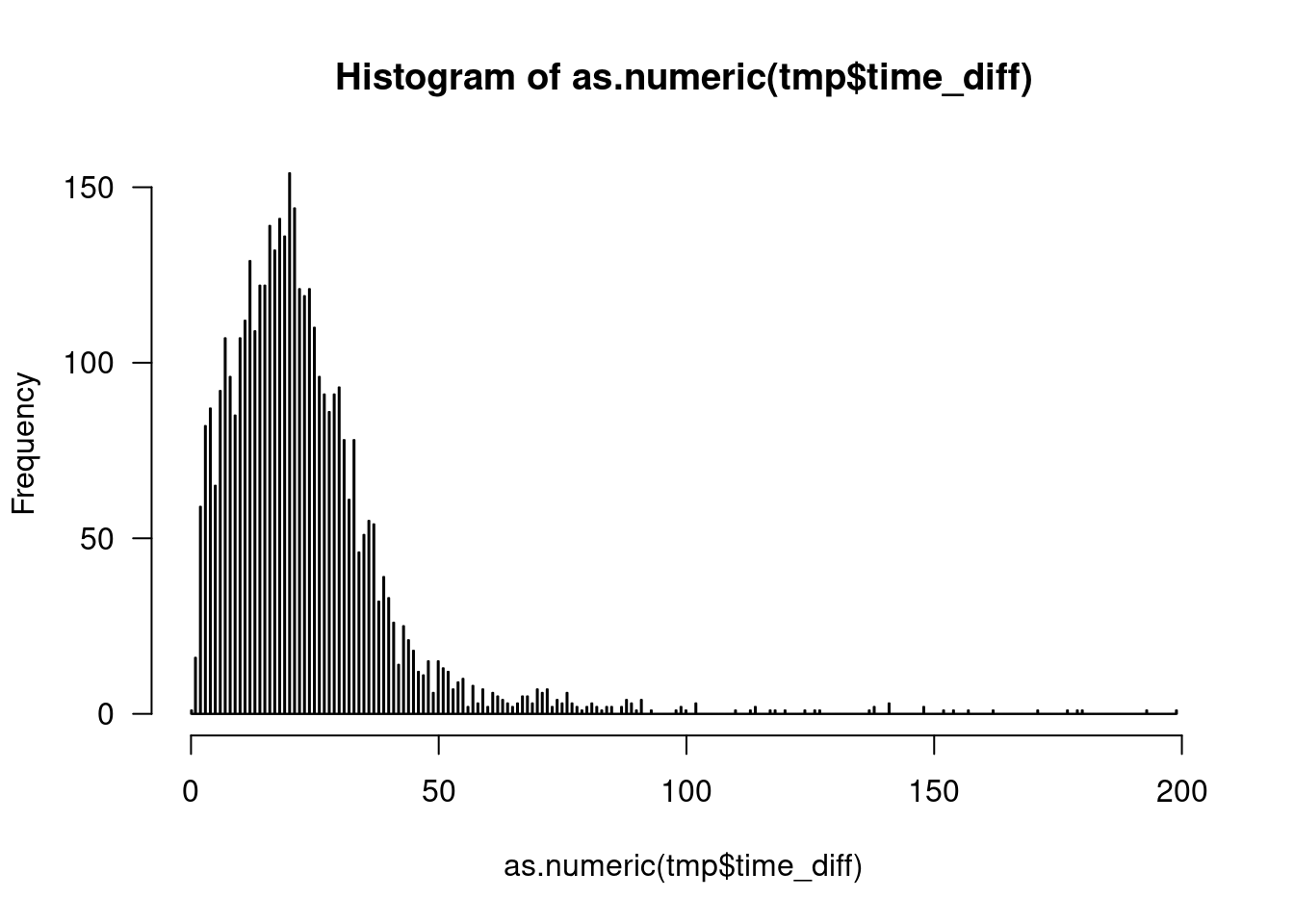

Now, we want to see how time-to-next-read is distributed. Since it has a veeeeery long right tail, I am cutting off everything above 200 seconds (just by eyeballing).

- Plot time-to-next-read is distribution (you can use base-R

histogram), - Can you find a better cutoff value?

5 Species Identification Challenge

In this challenge, your task will be to analyze species composition of some samples. The samples, were actual products containing parts of plants. DNA has been isolated form the samples and an amplicon metabarcoding was performed using two sets of primers: for the ITS1 and the ITS2 region. Each sample had 3 technical replicates. Your task will be to transform BLAST output to a tidy form suitable for further analyses or visualization.

5.1 Load necessary libraries

We will obviously need tidyverse, we will also do some string manipulations with stringr also here package is good to have.

library(tidyverse)

library(stringr)

library(here)5.2 Input variables

Here, we will define our input variables. We need:

filethat contains the path to the dataset,sample_nameis a string, the name of the sample you want to analyze,thresholdis an integer saying what is a the minimal number of replicates that have to contain an OTU in order to call it a true positive (TP),stricta logical. If set to TRUE, only the OTUs deemed TP will be shown.

Below we set some example values:

# Change the path to your project path, where your data is

file <- here::here("docs/tidyverse_Marcin/lab/assets/blast_result.csv")

sample_name <- 'SAMPLE12'

threshold <- 1

strict <- F5.3 Reading the data

Now, you should read the data:

Code

species_orig <- read_csv(file, col_names = c("sample","its","replicate","OTU","size","hit","perc_ident","score","family","species")) %>%

select(-score)

head(species_orig,n = 10)As you see, the following information are included in the data:

sampleis simply the name of the sample,itsis either ITS1 or ITS2 and tells which set of PCR primers has been used,replicatecontains information on which replicate the sequences come from,OTUis a unique identifier of the so-called Operational Taxonomic Unit, an OTU often corresponds to one species but not always. Sometimes 2 OTUs represent the same species, sometimes 1 OTU consists of more than one species,sizeis the number of reads that support that particular OTU,hitis the BLAST hit identifier. The 4 top BLAST hits are reported per OTU,perc_identityis the percentage identity of the sequence to the BLAST hit,familyis the identified plant family,speciesis the identified plant species.

5.4 Number of replicates per species

Create a new dataset species that contains an extra column n_replicates. The column contains number of replicates this particular species is present in. Do it per sample and its.

Code

species <- species_orig %>%

group_by(sample, its, species) %>%

mutate(n_replicates = n_distinct(replicate)) %>%

ungroup()

head(species,n = 10)5.5 Filter out unwanted samples

Now, your task is to filter out all but your sample_name samples from the dataset. Call the resulting dataset my_sample.

Code

my_sample <- species %>%

filter(sample == sample_name)5.6 Missing observations

What happens if a set of primers failed to amplify or if one replicate was lost? Use complete() to make sure you have NA values in such cases.

Code

my_sample <- my_sample %>%

complete(its = c("ITS1", "ITS2"),

replicate = c("R1","R2","R3"))5.7 Sorting issue

Look, the first sample in the table is SAMPLE10. Why not SAMPLE1? That’s a sorting issue: if sorted as character, 10 will come before 1. WE have to fix this by adding trailing zero to the values in OTU. We do not expect more than 99 OTUs in a sample, so it is ok with only one trailing 0 (otherwise the 100th sample will spoil our sorting and come out like: SAMPLE100, SAMPLE01, SAMPLE10). We will need to use regular expression:

- All values in the

OTUcolumn that follow pattern “OTUdigit” we need to change to “OTU0digit”. Regular expression that matches this isOTU([0-9]$)and it should be replaced by:OTU0\\1. Ask your TAs to explain this if you do not know much about regular expressions and pattern matching.

my_sample <- my_sample %>%

mutate(OTU = str_replace(OTU,pattern = "OTU([0-9]$)",

replacement = "OTU0\\1"))

head(my_sample,n = 10)5.8 Supporting reads

Sometimes, an OTU generates two or more top BLAST hits that come from the same species. We have decided to sum reads in such cases. Do it!

Code

my_sample <- my_sample %>%

ungroup() %>%

group_by(sample, its, replicate, OTU, species, n_replicates) %>%

summarise(n_reads = sum(size)) %>%

ungroup() %>%

group_by(its, species, OTU)

head(my_sample,n=10)5.9 Within-OTU species diversity

Now, we want to see how many identifications an OTU got. Implement this. Store the result in a new tibble diversity.

Code

diversity <- my_sample %>%

ungroup() %>%

group_by(its, replicate, OTU) %>%

summarise(n_species = n())

head(diversity,n=10)5.10 Adding diversity data

Add the diversity data to my_sample using appropriate join function. Also, remove the column with sample names since we are dealing with only one sample.

Code

my_sample <- my_sample %>%

left_join(diversity) %>%

select(-sample)

head(my_sample,n=10)- What columns did we join on in our example solution?

5.11 Visualising the data

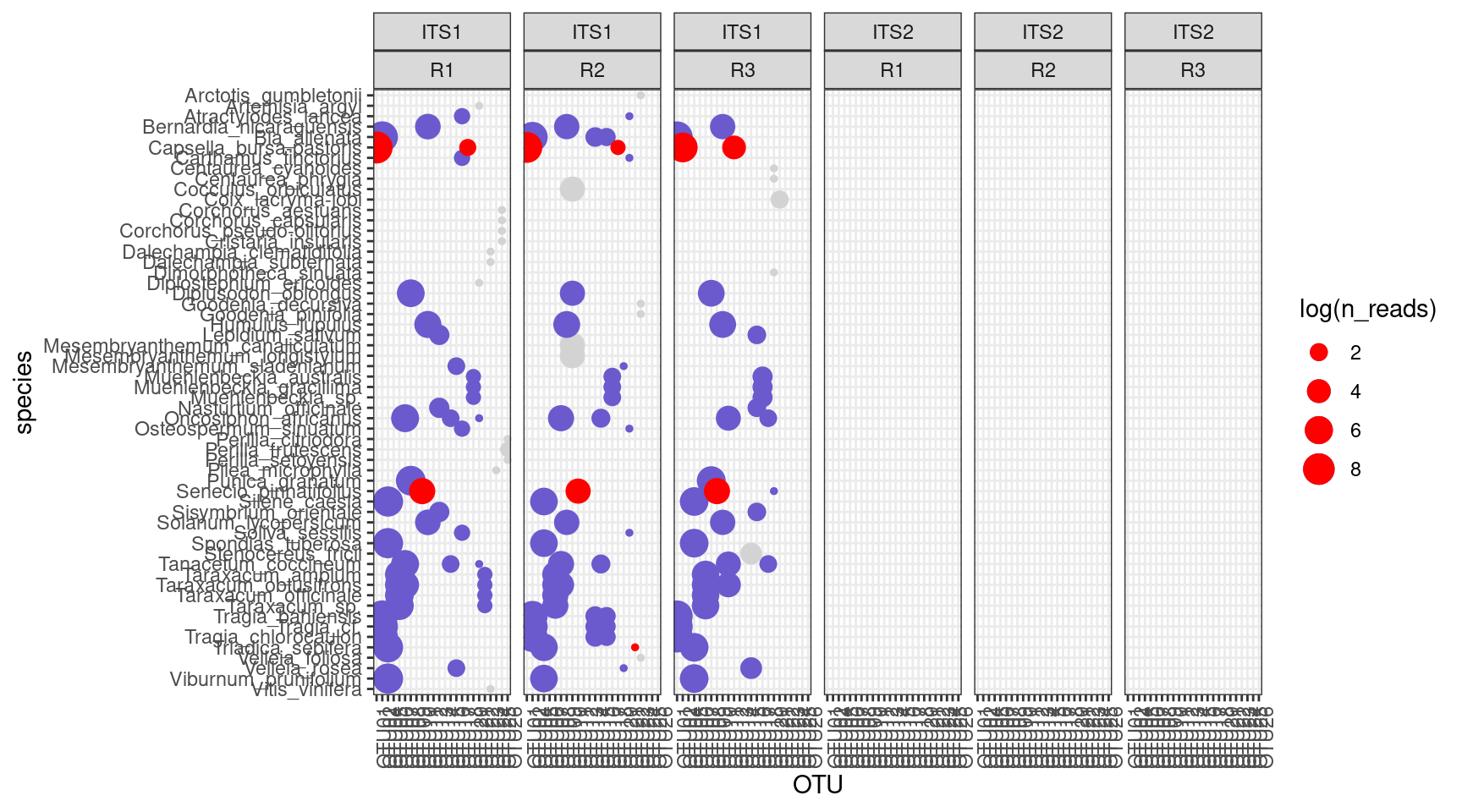

Can you think of a good way of visualizing the data? Think of:

- Type of plot you want. The simpler the better?

- Which variables you would like to visualize? We have chosen

ITS,replicate,n_reads,n_replicates,OTU,threshold,n_speciesandspecie. Well, pretty much all of them :-) - How do you represent variables: colors, shapes, separate plots, sizes?

- What transformations do you need to apply before visualizing the data?

Our example solution involves some ggplot2 magics. But would base-R be good enough for this type of plot?

6 Wildlife Aircraft Strikes Challenge

Use the FAA report and tidyverse to learn more about aircraft incidents with wildlife. Use your imagination and NYC data science blog for inspiration!