Good practices of NN/DL project design

NBIS

08-May-2026


What to do and, perhaps more importantly, what not to do

Is my project right for Neural Networks?

The thought process should not be: “I have some data, why don’t we try neural networks?”

But it should be: “Given the problem, does it make sense to use neural networks?”

- Do I really need non-linear modelling?

- What literature is out there for similar problems?

- How much data will I be able to gather or put my hands on?

- Are there datasets out there that I can re-use before I collect my data?

Do I really need non-linear modelling?

- Linear methods sometimes perform just as well, if not better

- Less risk of catastrophic overfitting

- Faster to code, optimize, run, debug

- Use linear modelling as a baseline before you move to non-linear methods
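A minimal sketch of such a linear baseline, assuming a binary classification task. The data is synthetic and `fit_logistic_regression` is a hypothetical helper written in plain NumPy (batch gradient descent on the log-loss) rather than a call into a specific library; any NN you try afterwards should beat the resulting development accuracy to be worth its cost.

```python
import numpy as np

def fit_logistic_regression(X, y, lr=0.1, epochs=500):
    """Fit a logistic regression (a linear classifier) with batch gradient descent."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))  # predicted probabilities
        grad = p - y                             # gradient of the log-loss w.r.t. the logits
        w -= lr * X.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b

def accuracy(X, y, w, b):
    return float(((X @ w + b > 0).astype(int) == y).mean())

# Synthetic data (hypothetical): 1000 samples, 50 features, 2 classes
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 50))
true_w = rng.normal(size=50)
y = (X @ true_w > 0).astype(int)

X_train, y_train = X[:800], y[:800]
X_dev, y_dev = X[800:], y[800:]
w, b = fit_logistic_regression(X_train, y_train)
baseline_acc = accuracy(X_dev, y_dev, w, b)
print(f"Linear baseline dev accuracy: {baseline_acc:.3f}")
```

On real data you would use your own train/development split; a library implementation such as scikit-learn's `LogisticRegression` does the same job with better optimizers.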

Real-life example

Question from group leader: “I tried deep learning on my data and it didn’t perform better than this other simpler method”

- Classifying gene expression samples

- Thousands of features

- 1000 samples

- 2 classes

- The NN looked like this:

Parameters (weights) vs. samples

- A feedforward NN with 2 hidden layers and $Q$ hidden nodes can perfectly store (memorize) $O(Q^2)$ samples (ref)

- If the number of parameters is many times higher than the number of samples, a NN will most likely just memorize the training data and fail to generalize

- Ideally, we want the opposite: many more samples than parameters

- Some rules of thumb out there:

- Definitely bad if number of weights > number of samples

- 10x as many labelled samples as there are weights

- A few thousand samples per class

- Just try it and downscale/regularize until you’re not overfitting anymore (or until you have a linear model)
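To check these rules of thumb, count the parameters before training. A minimal sketch for fully connected layers (weights plus biases); the layer sizes below are hypothetical, chosen to resemble the gene expression example (thousands of features, 1000 samples, 2 classes), since the original architecture is only shown as a figure.

```python
def count_ffnn_parameters(layer_sizes):
    """Number of weights + biases in a fully connected feed-forward network."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))

# Hypothetical architecture: 5000 inputs, two hidden layers, 2 output classes
layers = [5000, 512, 256, 2]
n_params = count_ffnn_parameters(layers)
n_samples = 1000

print(n_params)                                 # 2692354
print(n_params / n_samples)                     # ~2692 parameters per sample
print("10x rule met:", n_samples >= 10 * n_params)  # False
```

For comparison, a linear model on the same inputs (`count_ffnn_parameters([5000, 2])`) has 10002 parameters: still more than the number of samples, which is why regularization matters even for the baseline.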

And even if I have enough data for a NN…

… is Deep Learning the right choice?

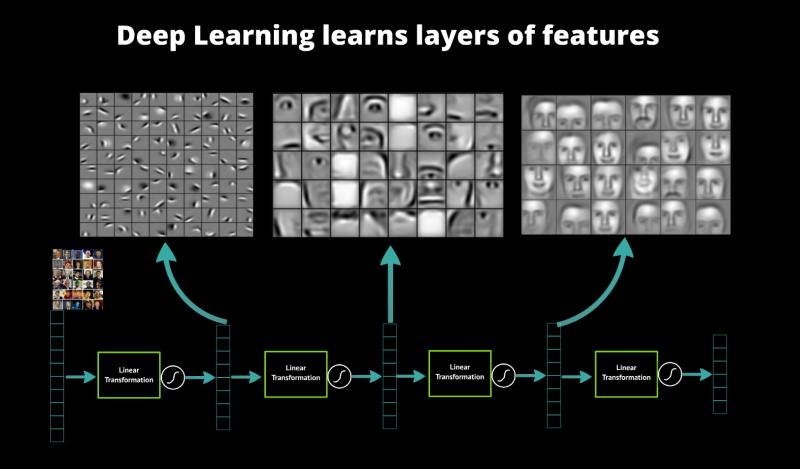

- The tasks where Deep Learning shines are those that require feature extraction:

- Imaging -> edge/object detection

- Audio/text -> sound/word/sentence detection

- Protein structure prediction -> mutation patterns/local structure/global structure

- Deep Learning makes feature extraction automatic and seems to work best when there is a hierarchy to these features

- Is your data made that way?

- Does it have an order (spatial/temporal)?

- Are smaller patterns going to form higher-order patterns?

- All these different types of layers need to be there for a reason

source: datarobot

And even when both these conditions have been met

… you need a few more things:

- Domain knowledge is not enough

- Sometimes people with NN/DL knowledge and no domain knowledge end up being the right ones for the job (see AlphaFold)

- You also need lots of patience and time: these things rarely work out of the box

A few more things to keep in mind

- You need extensive knowledge of your data:

- Split the data in a rigorous way to avoid introducing biases

- Check for information leakage, which produces overly optimistic results

- Make sure that there are no errors in your data

And therein lies the main issue:

- Some think that DL is about having a model magically fix your data

- Reality: your network will at best be as good as your data

Neural Nets are very good at detecting patterns and they will use this against you

(a.k.a. target leakage)

Target leakage

- Making a predictor when you know the answers is not as easy as it seems

- You need to remove any revealing information you would not have access to in a real scenario

- Classic example: predicting an employee’s yearly salary

- But one of the features is “monthly income”
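One cheap first check for the salary example: flag any feature that is almost perfectly correlated with the target. This is a minimal sketch on synthetic data (all variables and the 0.99 threshold are hypothetical); it only catches simple, near-linear leaks like “monthly income”, not the subtler ones discussed below.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 500
years_experience = rng.uniform(0, 30, n)
age = years_experience + rng.uniform(20, 35, n)
yearly_salary = 30_000 + 2_000 * years_experience + rng.normal(0, 10_000, n)
monthly_income = yearly_salary / 12   # leaky: derived from the target itself

features = {
    "years_experience": years_experience,
    "age": age,
    "monthly_income": monthly_income,
}

def flag_suspicious_features(features, target, threshold=0.99):
    """Return names of features whose |Pearson correlation| with the target exceeds threshold."""
    flagged = []
    for name, values in features.items():
        r = np.corrcoef(values, target)[0, 1]
        if abs(r) > threshold:
            flagged.append(name)
    return flagged

print(flag_suspicious_features(features, yearly_salary))  # ['monthly_income']
```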

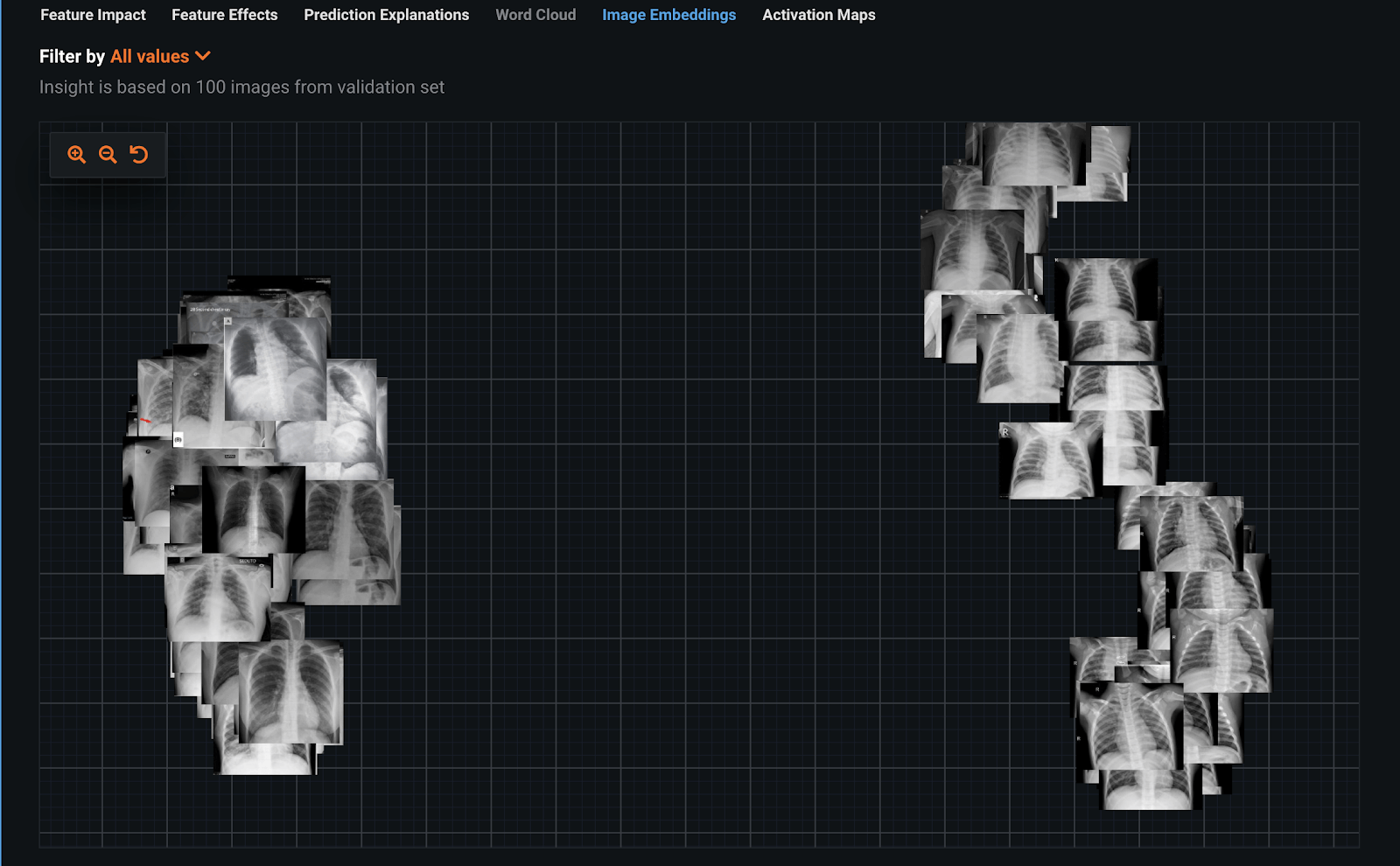

Example: detecting COVID-19 from chest scans

(https://www.datarobot.com/blog/identifying-leakage-in-computer-vision-on-medical-images/)

- COVIDx dataset

- Training set: chest X-rays of 66 COVID-positive cases and 120 random non-COVID examples

- 2-class classifier based on ResNet50 Featurizer

- Perfect validation/development results! Great!


Inspecting the dataset with image embeddings tells another story: can anyone tell what’s wrong?

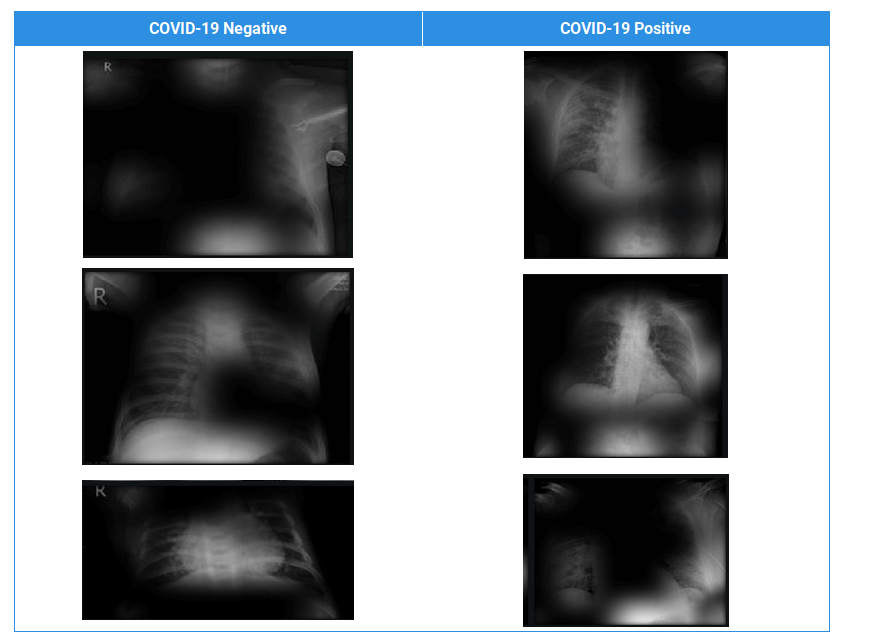


Let’s look at the activation maps in more detail

- Take the final layer’s output after the activation (ReLU) and project it back onto the input image
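A minimal sketch of the “plot back on input” step, assuming you have already extracted the post-ReLU feature maps of shape (channels, h, w) for one image. Averaging over channels and upsampling with nearest-neighbour repetition is a simplification (methods such as CAM or Grad-CAM weight the channels instead); the random feature maps below stand in for real ResNet50 activations, whose last block is 2048 x 7 x 7 for a 224 x 224 input.

```python
import numpy as np

def activation_overlay(feature_maps, input_shape):
    """Average post-ReLU feature maps over channels and upsample them to the input size.

    feature_maps: array of shape (channels, h, w).
    input_shape: (H, W) of the input image; assumed to be integer multiples of (h, w).
    Returns an (H, W) heat map scaled to [0, 1].
    """
    heat = feature_maps.mean(axis=0)                    # (h, w)
    H, W = input_shape
    h, w = heat.shape
    heat = np.kron(heat, np.ones((H // h, W // w)))      # nearest-neighbour upsampling
    heat -= heat.min()
    if heat.max() > 0:
        heat /= heat.max()
    return heat

rng = np.random.default_rng(0)
maps = np.maximum(rng.normal(size=(2048, 7, 7)), 0)  # stand-in for real activations
heat = activation_overlay(maps, (224, 224))
print(heat.shape)  # (224, 224)
```

With matplotlib you would then draw the X-ray with `imshow` and the heat map on top with some transparency (`alpha`); bright regions outside the lungs, such as text markers or borders, are a red flag.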

Example: normalizing inputs on train/development/test data

- If you compute normalization statistics (e.g. mean and standard deviation) on the development and test data as well, you are using information you wouldn’t have in a real scenario
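The leak-free version, as a minimal NumPy sketch on synthetic data: the statistics come from the training set only, and the same transformation is then applied unchanged to the development and test sets.

```python
import numpy as np

rng = np.random.default_rng(1)
X_train = rng.normal(loc=5.0, scale=2.0, size=(800, 3))
X_dev = rng.normal(loc=5.0, scale=2.0, size=(100, 3))
X_test = rng.normal(loc=5.0, scale=2.0, size=(100, 3))

# Statistics are computed on the training set only...
mean = X_train.mean(axis=0)
std = X_train.std(axis=0)

# ...and then applied as-is to every set
X_train_norm = (X_train - mean) / std
X_dev_norm = (X_dev - mean) / std
X_test_norm = (X_test - mean) / std
```

scikit-learn's `StandardScaler` follows the same pattern: call `fit` (or `fit_transform`) on the training data, then only `transform` on the development and test data. Only the training set ends up with exactly zero mean and unit variance; that is expected.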

Lab: looking for target leakage in a text dataset (~1 h.)

Jupyter notebook (good_practices/labs/target_leakage/investigating_target_leakage.ipynb)

Visualize the layers of a NN for Natural Language Processing:

- Can you tell if there is target leakage of some kind?

- Propose solutions to curb the issue

Know your train/development/test sets

- A training set is a set of samples used to tune the NN weights

- A validation or development set is a set used to tune the NN hyperparameters:

- Type of model (maybe not even a NN)

- Number of layers

- Number of neurons per layer

- Type of layers

- Optimizer

- Development set results are NOT the ones that get published

- This holds even if you cross-validate

- A test set is a held-out set of samples that is used only once, to test the final model

- It gives an idea of how well the model generalizes to unseen data (these are the results that go in the paper)
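A minimal sketch of a three-way split: shuffle the sample indices once and cut them into disjoint train/development/test index arrays. The 70/15/15 proportions are an assumption, not a rule.

```python
import numpy as np

def train_dev_test_split(n_samples, dev_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle indices once and cut them into disjoint train/dev/test index arrays."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_frac))
    n_dev = int(round(n_samples * dev_frac))
    test_idx = idx[:n_test]                     # set aside first, touched only once
    dev_idx = idx[n_test:n_test + n_dev]
    train_idx = idx[n_test + n_dev:]
    return train_idx, dev_idx, test_idx

train_idx, dev_idx, test_idx = train_dev_test_split(1000)
print(len(train_idx), len(dev_idx), len(test_idx))  # 700 150 150
```

A purely random split like this assumes the samples are independent of each other, which is often not the case in biological data.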

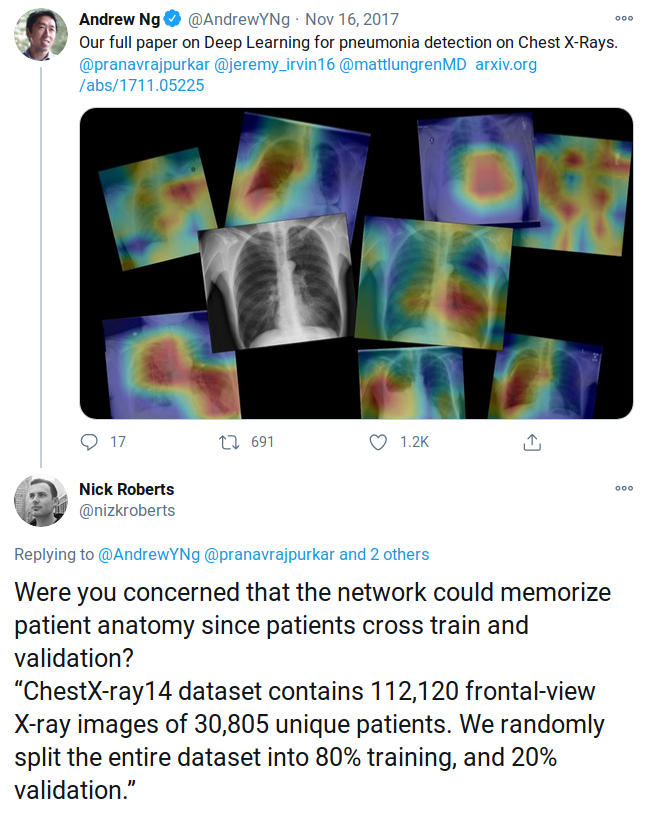

Beware of similar samples across sets

(2F08 “Fear of Flying”)


Knowing what each set does is half the battle

Train, development and test sets cannot be too similar to each other, or you will not be able to tell if the network is generalizing or just memorizing

- How different they should be depends on what you’re trying to achieve

- Come up with a similarity measure

- At the very least remove duplicate samples

- You would be surprised how often scientists mess this up
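Removing exact duplicates is the minimum. A minimal sketch, assuming samples have a deterministic `repr` (strings, tuples); for NumPy arrays you would hash `arr.tobytes()` instead. The toy sequences are hypothetical. Near-duplicates still need the similarity measure mentioned above.

```python
import hashlib

def fingerprint(sample):
    """Hash a sample's canonical string form, so identical samples get identical keys."""
    return hashlib.sha256(repr(sample).encode("utf-8")).hexdigest()

def deduplicate_across_sets(train, dev, test):
    """Drop duplicates within a set, and drop from dev/test any sample seen in an earlier set."""
    seen = set()
    cleaned = []
    for split in (train, dev, test):
        kept = []
        for sample in split:
            key = fingerprint(sample)
            if key not in seen:
                seen.add(key)
                kept.append(sample)
        cleaned.append(kept)
    return cleaned

train = ["MKTAYIAK", "GSHMLEDP", "MKTAYIAK"]
dev = ["GSHMLEDP", "PEPTIDEA"]
test = ["PEPTIDEA", "QWERTYAS"]
train, dev, test = deduplicate_across_sets(train, dev, test)
print(train, dev, test)  # ['MKTAYIAK', 'GSHMLEDP'] ['PEPTIDEA'] ['QWERTYAS']
```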

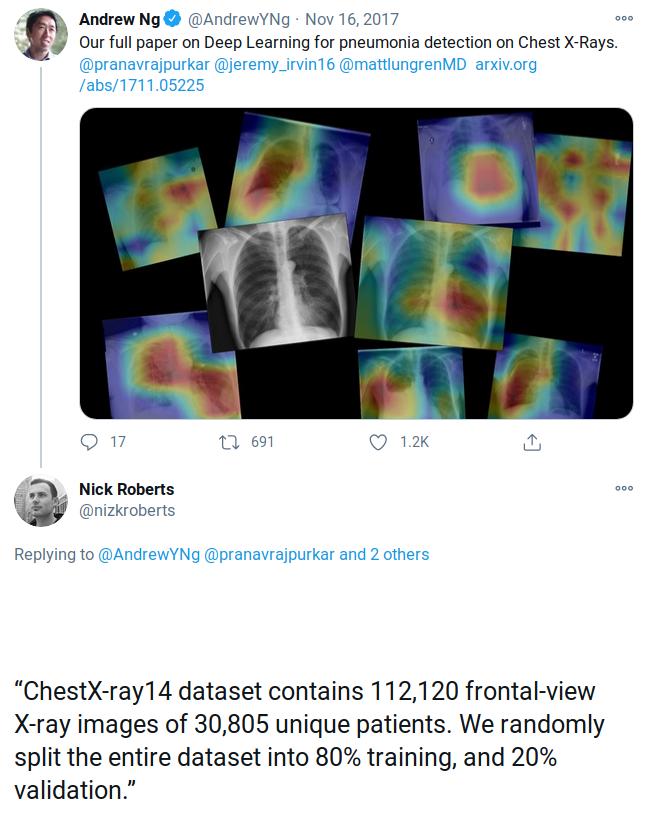

Another imaging example


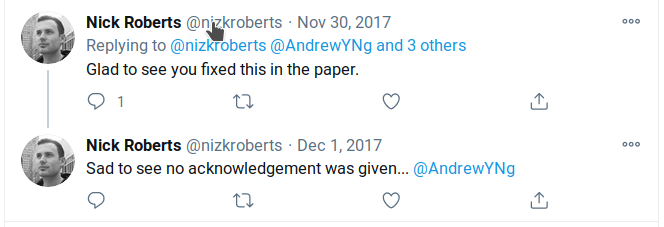

Sad ending :(

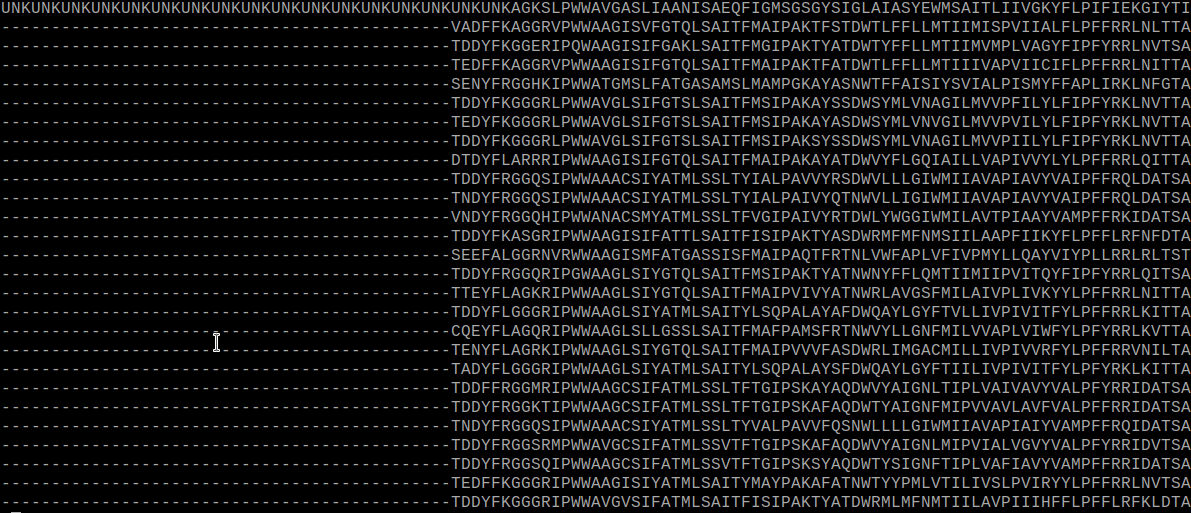

Another example, protein structure prediction

- For some reason, most researchers split train/development/test by sequence similarity

- If two proteins share less than 25% sequence identity, they are deemed different enough

- But protein families/superfamilies contain many proteins that share no detectable sequence similarity

- Sequence similarity is not the right metric!
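An alternative is to split by group, so that no protein family ends up in more than one set. A minimal sketch, assuming each sample already has a family label (for example from a structural classification such as SCOP or CATH); `split_by_group` and the toy labels are hypothetical.

```python
import random
from collections import defaultdict

def split_by_group(sample_ids, group_of, fractions=(0.7, 0.15, 0.15), seed=0):
    """Assign whole groups (e.g. protein families) to train/dev/test so no group spans two sets."""
    groups = defaultdict(list)
    for sid in sample_ids:
        groups[group_of[sid]].append(sid)
    group_names = sorted(groups)
    random.Random(seed).shuffle(group_names)

    targets = [f * len(sample_ids) for f in fractions]
    splits = ([], [], [])
    current = 0
    for g in group_names:
        # Move on to the next set once the current one has reached its target size
        while current < 2 and len(splits[current]) >= targets[current]:
            current += 1
        splits[current].extend(groups[g])
    return splits

proteins = [f"prot{i}" for i in range(20)]
family = {p: f"fam{i % 10}" for i, p in enumerate(proteins)}  # hypothetical labels
train, dev, test = split_by_group(proteins, family)
print(len(train), len(dev), len(test))  # 14 4 2
```

Set sizes only approximate the requested fractions, because whole groups are moved at once. scikit-learn's `GroupShuffleSplit` and `GroupKFold` implement the same idea.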

Lab: splitting a protein sequence dataset (~1 h.)

Jupyter notebook:

good_practices/labs/data_splits/rigorous_train_validation_splitting.ipynb

Two different strategies will be tested:

- Random split

- Split by alignment score

Which works best? Different groups test different networks on each strategy

Your model is only as good as your data

Reasons why one of my networks wouldn’t work:

- Labels were wrong (label for amino acid n was assigned to amino acid n+1)

- The actual target sequence was missing from the multiple sequence alignment

- Inputs weren’t correctly scaled/normalized

- The script converting three-letter amino acid codes to one-letter codes (LYS -> K) didn’t work as expected
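A conversion script like that is easy to test. A minimal sketch covering the 20 standard amino acids; it deliberately raises an error on unknown codes (e.g. non-standard residues such as MSE, which appear in PDB files) instead of silently skipping them, since a skipped residue shifts every label after it.

```python
THREE_TO_ONE = {
    "ALA": "A", "ARG": "R", "ASN": "N", "ASP": "D", "CYS": "C",
    "GLN": "Q", "GLU": "E", "GLY": "G", "HIS": "H", "ILE": "I",
    "LEU": "L", "LYS": "K", "MET": "M", "PHE": "F", "PRO": "P",
    "SER": "S", "THR": "T", "TRP": "W", "TYR": "Y", "VAL": "V",
}

def three_to_one(residues):
    """Convert a list of three-letter residue codes to a one-letter sequence.

    Fails loudly on unknown codes instead of silently skipping or guessing them.
    """
    unknown = sorted({r for r in residues if r.upper() not in THREE_TO_ONE})
    if unknown:
        raise ValueError(f"Unknown residue codes: {unknown}")
    return "".join(THREE_TO_ONE[r.upper()] for r in residues)

# Small tests like these are cheap and catch silent conversion bugs
assert three_to_one(["LYS"]) == "K"
assert three_to_one(["MET", "LYS", "THR"]) == "MKT"
```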

NNs are robust

They will “kind of” work even when some labels are incorrect, but it will be very tricky to tell whether something is wrong, and what

- Before training:

- Plot data distributions

- Test all data preparation scripts

- Manually look at data files

- Check labels for mistakes and class imbalance

- While training:

- Look at badly predicted samples

- Be paranoid when something doesn’t work well, and even more so when it works surprisingly well
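Two of these checks as a minimal sketch, assuming a binary task: counting samples per class before training, and listing the samples with the highest loss while training. The helper names and toy numbers are hypothetical.

```python
from collections import Counter

import numpy as np

def label_report(labels):
    """Count samples per class and return the ratio between the largest and smallest class."""
    counts = Counter(labels)
    return counts, max(counts.values()) / min(counts.values())

def worst_predicted(y_true, y_prob, k=5):
    """Indices of the k samples with the highest binary cross-entropy (worst predictions)."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(y_prob, dtype=float), 1e-7, 1 - 1e-7)
    loss = -(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))
    return np.argsort(loss)[::-1][:k]

counts, imbalance = label_report([0, 0, 0, 1, 0, 0, 1, 0])
print(counts, imbalance)  # Counter({0: 6, 1: 2}) 3.0

print(worst_predicted([1, 0, 1, 0], [0.9, 0.2, 0.05, 0.6], k=2))  # [2 3]
```

The indices returned by `worst_predicted` are the samples to open and inspect by hand: they are often mislabelled, malformed, or genuinely ambiguous.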

My data is perfect but I don’t have enough of it: what now?

Main avenues:

- Find more of it

- Make smaller models

- Cut down insignificant features

- Generate artificial samples: Data augmentation

- Transfer learning (so find more data, again)

- Think outside the (black) box

Feature selection

- We are moving away from Deep Learning (automatic feature extraction from raw data)

- Remove highly correlated inputs first; that’s easy

- Keep in mind that categorical inputs are more “costly” in terms of parameters

- E.g. a 10-category input will be encoded as 10 separate inputs (one-hot), each with its own weights

- Feature ablation studies

- Autoencoders to compress inputs?

- Feature importance through other ML methods:

- Random Forest

- Logistic regression
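The “easy” first step, removing highly correlated inputs, as a minimal NumPy sketch: keep a feature only if its absolute correlation with every feature already kept is at most a threshold. The 0.95 threshold and the synthetic data are assumptions; compute the correlations on training data only.

```python
import numpy as np

def drop_correlated_features(X, threshold=0.95):
    """Greedily keep features whose |correlation| with every already-kept feature is <= threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))   # (n_features, n_features)
    keep = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in keep):
            keep.append(j)
    return keep

rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
# Column 1 is (almost) a rescaled copy of column 0
X = np.column_stack([a, 2 * a + 0.01 * rng.normal(size=200), b])
print(drop_correlated_features(X))  # [0, 2]
```

Which member of a correlated pair survives depends on column order here; in practice you may prefer to keep the one that is cheaper to measure or easier to interpret.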

Feature ablation

- Ablation study (on features):

- Remove parts of the inputs and see what happens

- If results improve (or stay the same), keep those inputs out and remove some others

- If they get worse, put those inputs back and try removing different ones, and so on

- Could be implemented as a simulated annealing procedure to speed things up

- As usual, do this only on training data
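A minimal sketch of greedy backward ablation, assuming a least-squares linear model as the (cheap) model being probed and a validation part carved out of the training data. A feature is dropped when removing it does not make the validation error worse by more than a small relative tolerance `tol` (a hypothetical parameter); the search stops when every remaining removal hurts. Simulated annealing would replace this greedy acceptance rule with a probabilistic one.

```python
import numpy as np

def dev_error(X_tr, y_tr, X_val, y_val, features):
    """Validation MSE of a least-squares fit (with intercept) using only `features`."""
    A_tr = np.column_stack([X_tr[:, features], np.ones(len(X_tr))])
    A_val = np.column_stack([X_val[:, features], np.ones(len(X_val))])
    coef, *_ = np.linalg.lstsq(A_tr, y_tr, rcond=None)
    return float(np.mean((A_val @ coef - y_val) ** 2))

def backward_ablation(X_tr, y_tr, X_val, y_val, tol=0.02):
    """Repeatedly drop a feature whose removal does not noticeably worsen validation error."""
    features = list(range(X_tr.shape[1]))
    best = dev_error(X_tr, y_tr, X_val, y_val, features)
    removed_something = True
    while removed_something and len(features) > 1:
        removed_something = False
        for f in features:
            trial = [g for g in features if g != f]
            err = dev_error(X_tr, y_tr, X_val, y_val, trial)
            if err <= best * (1 + tol):   # removal did not make things (noticeably) worse
                features, best, removed_something = trial, err, True
                break
    return features, best

# Synthetic data: only features 0 and 1 matter
rng = np.random.default_rng(0)
X = rng.normal(size=(2000, 5))
y = 3 * X[:, 0] - 2 * X[:, 1] + rng.normal(scale=0.5, size=2000)
# Both parts come from the training data; the test set is never touched
X_tr, X_val, y_tr, y_val = X[:1000], X[1000:], y[:1000], y[1000:]
kept, err = backward_ablation(X_tr, y_tr, X_val, y_val)
print(kept)
```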

Regularizers

You thought we were done with PyTorch API explanations, but we’re not!

- Regularizers are used to constrain the training so that weights don’t get too big (a cause of overfitting)

- L1 regularization (Lasso):

- $L_r(x,y) = L(x,y) + \lambda \sum_{i,j} |w_{i,j}|$, where $\lambda$ sets the strength of the penalty

- Results in sparse weight matrices (many weights set to 0)

- L2 regularization (Ridge):

- $L_r(x,y) = L(x,y) + \lambda \sum_{i,j} w_{i,j}^2$

- Results in smaller weights

- Let’s have a look at TensorFlow Playground!
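The two regularized losses written out for a linear model with mean squared error as $L(x,y)$. A minimal NumPy sketch; `regularized_mse` and the value of `lam` (the $\lambda$ in the formulas) are illustrative.

```python
import numpy as np

def regularized_mse(w, X, y, lam=0.1, kind="l2"):
    """MSE of a linear model plus an L1 (Lasso) or L2 (Ridge) penalty on the weights."""
    data_loss = np.mean((X @ w - y) ** 2)        # L(x, y)
    if kind == "l1":
        penalty = lam * np.sum(np.abs(w))        # lambda * sum_ij |w_ij|
    elif kind == "l2":
        penalty = lam * np.sum(w ** 2)           # lambda * sum_ij w_ij^2
    else:
        raise ValueError(f"unknown regularizer: {kind}")
    return float(data_loss + penalty)

X = np.array([[1.0, 0.0], [0.0, 1.0]])
y = np.array([1.0, 2.0])
w = np.array([1.0, 2.0])   # fits the data exactly, so the data loss is 0
print(regularized_mse(w, X, y, lam=0.5, kind="l1"))  # 1.5
print(regularized_mse(w, X, y, lam=0.5, kind="l2"))  # 2.5
```

In PyTorch, an L2 penalty is commonly applied through the `weight_decay` argument of optimizers such as `torch.optim.SGD` (for plain SGD this matches an L2 penalty up to a constant factor in $\lambda$), while an L1 penalty is usually added to the loss by hand, as above.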

Data augmentation

- We have already seen it in action during the Convolutional lab

- If we have few images, we can flip them, rotate them, shift them…

- Extra instances that the network will benefit from

- Pixel-wise classification was also a form of augmentation!

- 100 images of size 640x480 suddenly become 100x640x480 = 30,720,000 labelled samples

- Extreme examples: generative neural networks (see VAE from yesterday)

- Similar things might be done to other kinds of data as well

- Can you imagine ways to augment the data you work with?
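The flips and rotations mentioned above, as a minimal NumPy sketch: the 8 combinations of 90-degree rotations and a horizontal flip. Only use transformations under which the label stays valid (a left-right flipped chest X-ray puts the heart on the wrong side), and for pixel-wise labels apply the same transformation to the label mask.

```python
import numpy as np

def dihedral_augment(image):
    """Return the 8 rotations (0/90/180/270 degrees) of a 2D image, each with and without a horizontal flip."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k)
        variants.append(rotated)
        variants.append(np.fliplr(rotated))
    return variants

image = np.arange(6).reshape(2, 3)   # toy 2x3 "image"
augmented = dihedral_augment(image)
print(len(augmented))  # 8
```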

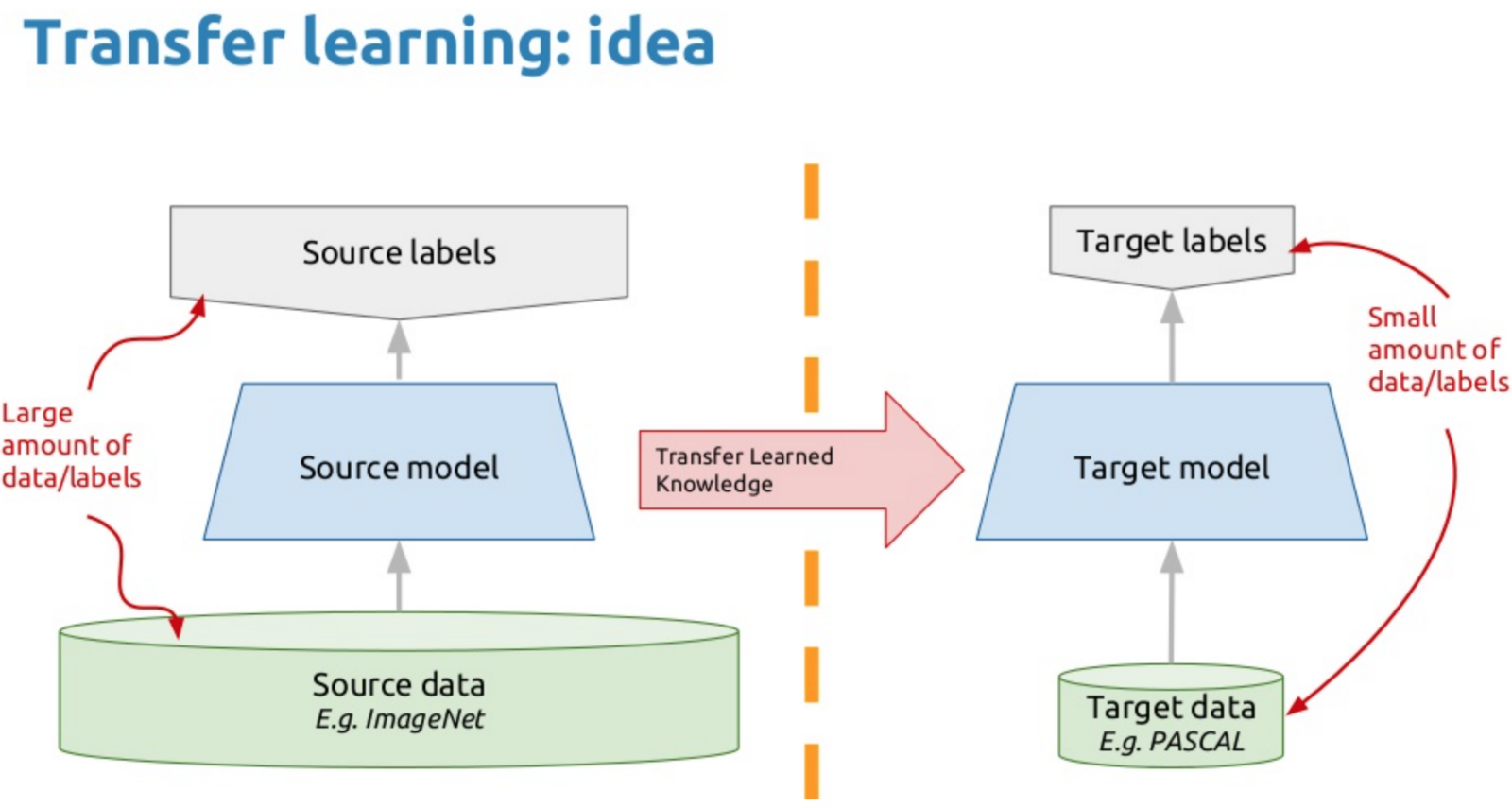

Transfer learning

- Say you have a small labelled dataset for a specific problem

- But there are larger datasets out there for similar applications

- Transfer learning means training a large Neural Network on the large set, then using parts of it on the small set


- Train a deep classifier on a large dataset

- The bottom (first) layers of the network learn to extract relevant features

- The top (last) layers learn to classify

- Keep the bottom layers and freeze them (so that their weights can’t change anymore)

- Re-initialize the top layers’ weights randomly

- Retrain the network on the small dataset, so that only the top layers’ weights are trained
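A minimal NumPy sketch of the freeze-and-retrain recipe. The “pretrained” bottom layer is a hypothetical stand-in (a random linear map followed by ReLU) and the small dataset is synthetic; the point is only that the bottom weights are never updated, while a freshly initialized top layer is trained on the small set.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for bottom layers pretrained on a large dataset (hypothetical: random here)
W_bottom = rng.normal(size=(20, 64)) / np.sqrt(20)
W_bottom_before = W_bottom.copy()

def features(X):
    """Frozen bottom layers: a fixed linear map followed by ReLU."""
    return np.maximum(X @ W_bottom, 0.0)

# Small labelled dataset for the new task (synthetic)
X_small = rng.normal(size=(100, 20))
y_small = (X_small[:, 0] > 0).astype(float)

# Re-initialize the top layer randomly and train only it (logistic regression on frozen features)
w_top = rng.normal(scale=0.01, size=64)
b_top = 0.0
H = features(X_small)   # computed once, since the bottom weights never change
for _ in range(2000):
    p = 1.0 / (1.0 + np.exp(-(H @ w_top + b_top)))
    grad = p - y_small
    w_top -= 0.1 * H.T @ grad / len(y_small)
    b_top -= 0.1 * grad.mean()

train_acc = float((((H @ w_top + b_top) > 0) == (y_small == 1)).mean())
print(f"train accuracy: {train_acc:.2f}")
```

In PyTorch the same idea is commonly expressed by setting `requires_grad = False` on the bottom layers’ parameters and passing only the top layers’ parameters to the optimizer.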
